Promise and peril

This is part two of Between wellness and medicine, a series of articles examining the growing market of consumer neurotechnology devices caught between medical and wellness regulation. Subscribe to receive parts three and four via email (select “Neurotech”).

The consumerisation of neurotechnology: opportunity or risk?

In just a decade, the number of consumer neurotechnology companies grew from 41 in 2014 to 153 in 2024, surpassing the number of companies developing medical neurotechnologies. More than one-third (and more than half in the US) of these consumer companies target wellness applications, including sleep improvement, stress management, focus, burnout prevention, or mood and productivity enhancement using wearables. Consumer neurotechnologies extend the digital data ecosystem, building on existing infrastructures such as smartphones, the internet, social media, LLMs, and, increasingly, chatbots. These technologies are here to, in their manufacturers’ own words, “democratise brain health”.

Naturally, the main entry point for brain-sensing consumer neurotechnologies into wellness markets (a sector that has doubled in size since 2013[1]) is mental health. Mental health has been identified as one of the six areas of growth[2] in the wellness space in 2025, coinciding with the broader context of a mental health crisis[3] in the EU and beyond. This climate is likely to continue driving market growth for neurotechnologies, particularly given the limited[4] and uneven[5] support provided by healthcare systems, where global median public spending on mental health[6] accounted for just 2.1% of government health expenditure in 2021. At the same time, the pressure to perform and self-optimise is high, and particularly pronounced among younger populations, who spend disproportionately on wellness relative to their share of the population[7] and who also experience some of the worst mental health outcomes[8]. Neurotechnology companies have identified these needs and are increasingly positioning their products as solutions to mental health-adjacent problems – and they could indeed make a real impact.

This psychosocial and economic climate coincides with technological advances (such as the miniaturisation of sensors into wearables or advances in AI) that are critical for the widespread consumer adoption of neurotechnologies with more advanced capabilities. Applications of these tech advances are being explored by Big Tech companies through internal R&D efforts or strategic investments, which means that neurotech could soon be integrated into the devices that we already use every day. Embedding powerful capabilities into consumer form factors opens new avenues for remote monitoring, prevention, and self-care[9],[10]. For instance, earbuds or headphones with integrated EEG can now be used to warn of an increased risk of epileptic seizures[11], and recently a portable device[12] that uses transcranial direct current stimulation obtained FDA clearance to treat depression at home[13] after reporting successful clinical trials[14]. However, this technological capacity to “consumerise” medical devices – or, conversely, to “medicalise” consumer form factors – inevitably blurs the line between consumer and medical applications, and amplifies the potential consequences of the regulatory grey zone between wellness and medicine.

When medical and consumer products start to look very similar, but the regulatory frameworks that govern them are very different, ambiguities can emerge, and commercial incentive structures can shift (see Introduction: Cases). The strict but structured medical pathway, contrasted with a more permissive yet complex and fragmented consumer market, can reshape market behaviour. For instance, practices such as misleading marketing without sufficient evidential backing (likely aiming for the credibility of the medical field without having to prove efficacy) have already been documented in wellness markets for other technologies, both in the context of the EU’s MDR[15] and the FDA[16] framework in the US. Often, these practices can be perfectly legal, but this does not mean they are necessarily without risks[17]. Hence, anticipating and analysing the prevalence of such permissible (yet nonetheless controversial) practices in the neurotechnology field is a prerequisite for the responsible development of the industry.

Against this backdrop, and building on the company database compiled for CFG’s previous publication, the Neurotech Consumer Market Atlas, CFG has analysed the market practices of nearly 160 consumer companies, of which 56 focus on wellness applications. The analysis focuses on:

- Marketing claims and language used on company websites and social media

- Scientific evidence cited in support of product claims

- Transparency regarding regulatory status and the presence or absence of medical certification

The collected evidence, discussed and contextualised through an expert roundtable and interviews with industry, academic, and policy experts, provides an empirical basis for CFG’s identification of the risks that shape, and in some cases constrain, the responsible deployment of consumer neurotechnologies.

What consumer neurotechnology could enable

Understanding the opportunities associated with wellness neurotechnologies helps clarify what is at stake in the face of regulatory ambiguities and grey areas. Developed and deployed responsibly, consumer neurotechnologies hold particular promise across at least the three areas outlined below.

Continuous monitoring beyond the clinic

In order to appeal to a mass market, consumer devices are designed specifically with portability, wearability, and ease of use in mind, often with sleek form factors that can be used comfortably and unobtrusively. Similarly to other wearable technologies[18], this could enable continuous, large-scale monitoring outside the clinic, in normal day-to-day life[19]. This form of monitoring has shown healthcare relevance[20], but has until recently been difficult to support in neurology, as medical neurotechnologies tend to be bulky, non-portable and dependent on professional operation. Consumer-grade devices[21], even if they do not yet match medical-grade equipment[22] in signal quality, can nonetheless make a meaningful contribution by enabling continuous recording outside clinical settings. This gain in portability may benefit neuroscience research and medical innovation, where reliance on laboratory-based conditions, in which subjects remain still and respond to simulated environments, is a long-recognised limitation for understanding brain function in natural contexts[23].

One example of the clinical value of portability and wearability concerns sleep diagnostics. Sleep studies using EEG are typically conducted in specialised clinical settings, where patients often find it difficult to sleep. Wearables can be particularly valuable when measuring a condition in hospital settings is challenging, either because it is transient or because it depends on preconditions that are difficult to reproduce, such as sleeping in a clinic. Wearables can reduce the need for hospital visits and increase the likelihood of obtaining meaningful data. Sleep monitoring is therefore increasingly being explored for at-home use through wearable EEG by multiple companies[24].

Data at scale for the promotion of health

Data collected using neurotechnology wearables could be used to inform clinical applications, but one current limitation[25] of this approach is data availability. Widely adopted consumer neurotechnology wearables could help bridge this gap by providing longitudinal datasets at scale. When analysed using computational methods, including AI-based approaches, such data could help identify early indicators of neurological or psychiatric conditions[26] and promote healthy habits, much like how other consumer wearables[27] have advanced cardiovascular health tracking and prevention through step counts, heart rate variability, and blood pressure measurements. Health-promotion interventions based on wearable neurotechnologies remain largely unproven at scale, particularly when relying on current consumer devices, but even incremental improvements could have a substantial impact at a time when neurological disorders are rising[28].

Furthermore, baseline cognitive or neural biomarkers may help identify which individuals are more likely to respond[29] to specific treatments or interventions, improving treatment matching, reducing side effects, and promoting the efficient use of resources. Changes in baseline biomarkers could inform about pre-symptomatic disease and promote early intervention, which has been shown to reduce or slow down disease progression[30] in Alzheimer’s disease and depression[31], among others.

Last but not least, data from wearables can help contextualise the triggers of psychological struggles. One area where this potential is particularly salient concerns the relationship between digital technologies and mental health. It is widely acknowledged today that smartphone use is likely associated with adverse mental health outcomes in young people, and yet establishing a causal link to underpin this phenomenon has proven extremely difficult. Wearable neurotech embedded into everyday devices like headphones may allow researchers to disentangle and empirically test these relationships, which is critical for both advancing research and shaping policies.

Value outside the clinic

Some wellness neurotechnologies already support everyday practices such as meditation, sleep, learning routines, or focus tracking. These functions do not need to be framed in medical terms to provide value: if advertising language remains proportionate and transparent, some of these devices can be understood simply as part of the broader repertoire of tools people use to feel better, focus, or cope with daily challenges, just as they might take up sport, attend a meditation class, improve sleep hygiene, or silence notifications to concentrate.

How neurotechnology is marketed to consumers

For low-risk devices, regulatory status as a medical or consumer device under the MDR or FDA frameworks is determined by claims about intended purpose. As a result, a wearable neurotechnology product with medical capabilities that is marketed using wellness language will not be considered a medical device under these frameworks, while a device without proven medical efficacy that makes medical claims will fall under medical regulatory scrutiny. Hence, independently of the underlying technology, the way these claims are framed by companies, including the wording used and the messages conveyed to consumers, plays a central role in shaping the regulatory grey zone.

To shed light on this issue, CFG examined the claims and language used across both webpages and selected social media channels of more than 150 consumer neurotechnology companies (i.e. those without medical clearance[32]), including firms with products on the market as well as those in development. The analysis uncovered several areas of concern.

Webpage and social media behaviour in consumer markets

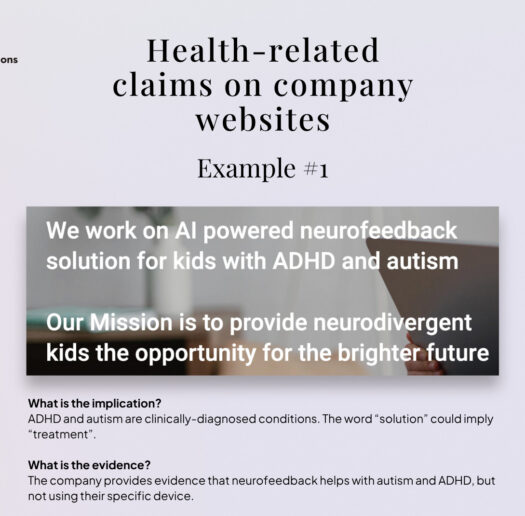

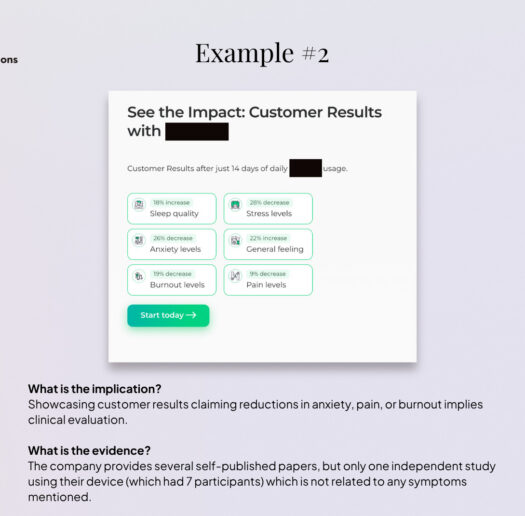

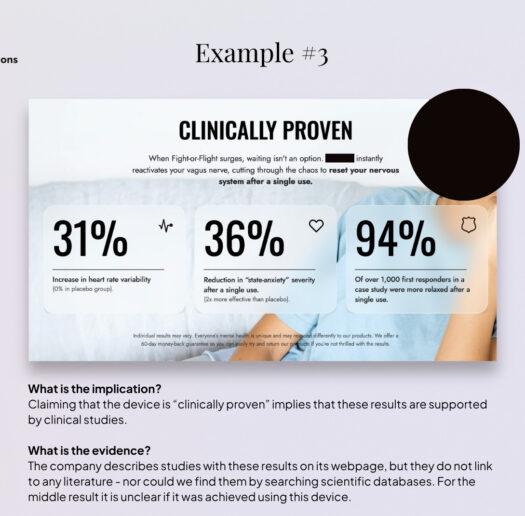

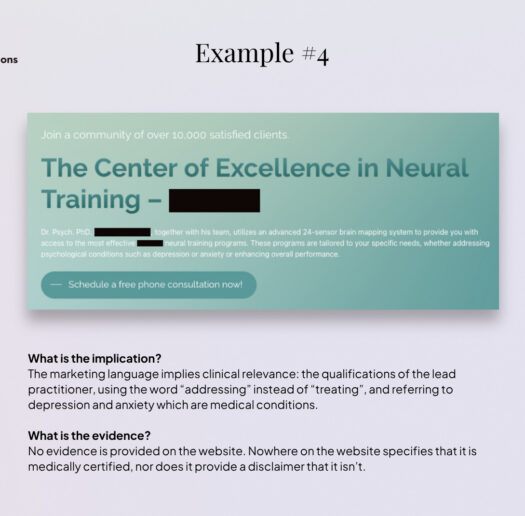

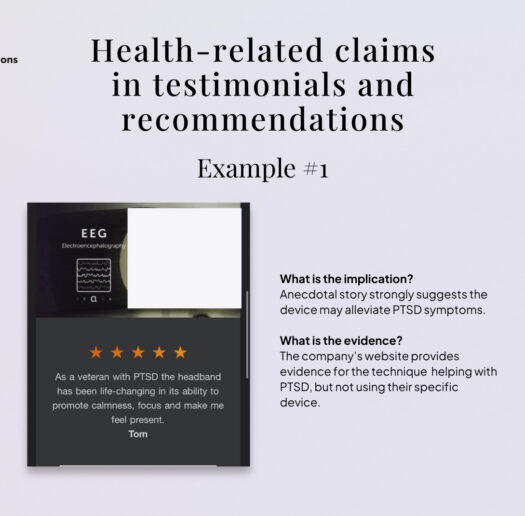

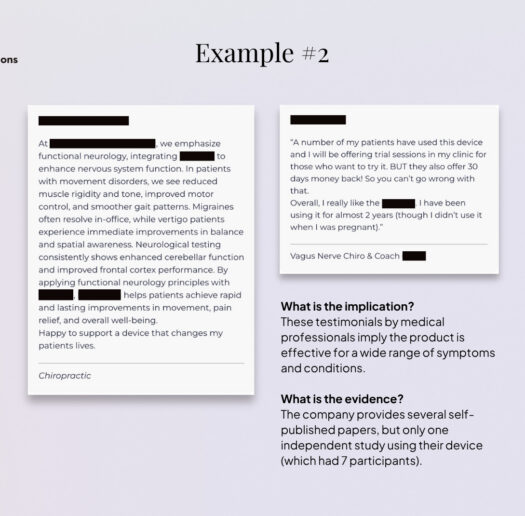

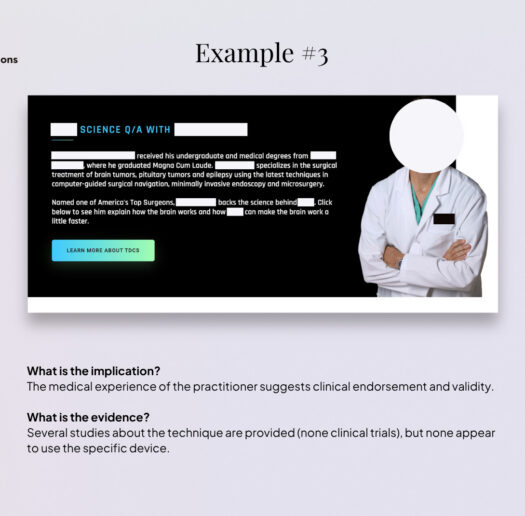

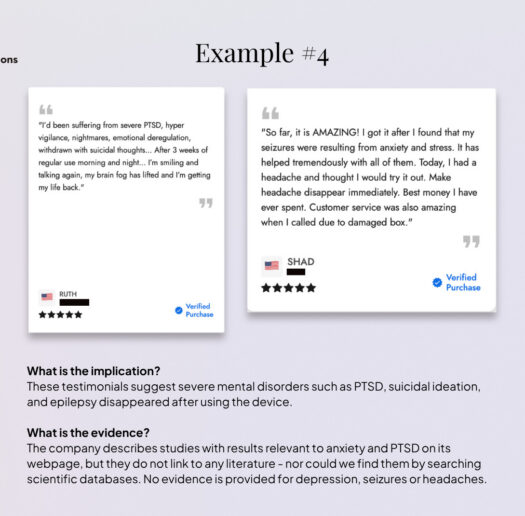

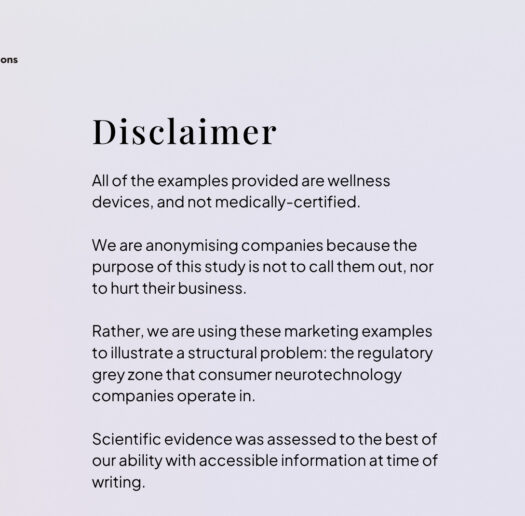

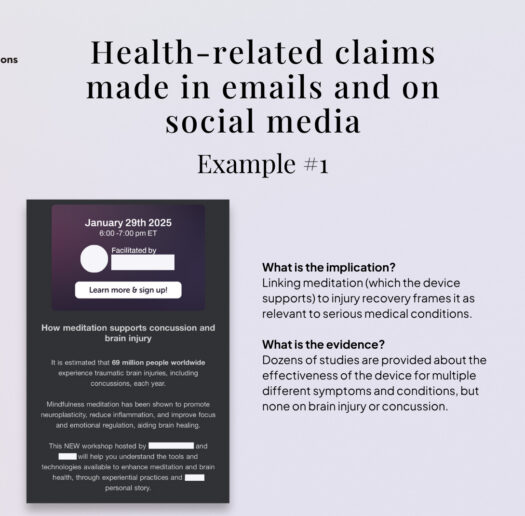

A selection of the most problematic or potentially misleading practices we found in consumer markets is compiled in the galleries of anonymised examples below[33]. Most of these practices (though not all) are not unlawful per se, as they rely on the language ambiguities that characterise the regulatory grey zone; at the same time, not all companies analysed engage in controversial practices. In fact, practices vary substantially across companies, and a significant share position themselves clearly in the wellness space, avoiding medical references and explicit medical language.

However, we did find that it is relatively common for companies to explicitly target symptoms that are also associated with neurological or psychiatric conditions and, by extension, to imply benefits for managing those conditions.

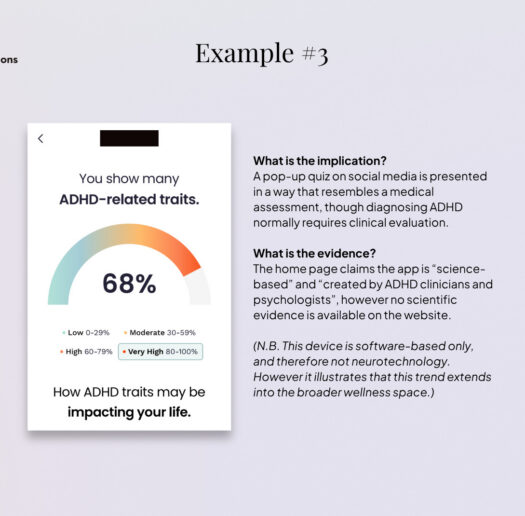

This pattern is particularly prevalent among devices marketed for stress reduction, mental clarity, or focus enhancement, which are frequently positioned as adjacent solutions for managing depression, burnout, anxiety, or ADHD, including through featured testimonials and recommendations by medical professionals. Other recurrent examples include references to pain, mood, sleep disorders, ADHD and autism.

Much of this activity unfolds in the absence of clear guidance on acceptable consumer-facing claims. Terms such as “help with,” “support,” “alleviate,” or “manage” are widely used and remain permissible in consumer markets precisely because they avoid the medical language of “diagnose” or “treat” that would trigger scrutiny under the MDR. But even if technically allowed, these nuances are unlikely to be easily parsed by consumers and may contribute to the perception that a device is medical when it is not. Current European regulation provides limited clarity on this issue, and these practices appear to have established themselves in the market – potentially expanding in parallel with market growth.

Marketing practices outside official websites raise additional concerns. Content disseminated through social media (e.g. LinkedIn, Instagram), subscription newsletters, interviews on YouTube, or webinars can be more aggressive than on official websites. Here, products are again associated – implicitly or explicitly – with alleviating symptoms of anxiety, depression, burnout, ADHD, or, in some cases, even brain injury, long COVID, or Alzheimer’s disease. Notably, even companies that otherwise tick the right boxes – positioning themselves clearly within the consumer space; possessing solid scientific backing – frequently post content linking their products to medical topics. One plausible explanation is that this trend is exacerbated by the ephemeral nature of some of this content (e.g. Instagram stories), which can shape consumer impressions while leaving limited traceability and reducing the likelihood of regulatory scrutiny.

In addition, across both company webpages and social media channels, we observe the use of medical aesthetics, such as individuals shown in lab coats, medical-style diagrams, tests, and diagnoses. Similar patterns extend across the broader wellness device sector and appear to be gaining momentum (and raising concerns) in software-based applications[34], particularly with the expansion of therapy chatbots[35], diagnostic apps[36], and health and wellness-oriented LLMs[37], as illustrated by the examples of online ADHD self-tests.

This combination of symptom-targeting claims, ambiguous language, and medical aesthetics is likely to shape consumer perceptions in ways that blur the boundary between wellness and medical use.

Grey zone marketing claims

As a complement to the qualitative analysis of language and claims, we examined the overall impression conveyed to consumers by the language used in this market. To that end, we conducted an LLM-based analysis of scraped website text to assess how manufacturers’ claims may be interpreted by consumers at first encounter (see Methodology). While this approach cannot substitute for consumer surveys, existing research[38],[39],[40],[41],[42] has examined the use of such models to simulate aspects of human perception and judgement, and it provides a structured proxy for first-impression interpretation.

Using this approach, we found that nearly 70% of neurotechnology firms without U.S. Food and Drug Administration (FDA) or Conformité Européenne (CE) clearance were nonetheless presented through their marketing language in ways that led the model to classify them as medical (45%) or borderline medical (25%), suggesting a substantial risk of consumer misperception regarding the nature and efficacy of certain products, and highlighting the need for further empirical research on consumer perception of these devices – ideally, through surveys.

What’s at stake: supplanting medical care

The marketing practices described pose risks to consumer interpretation and downstream behaviour, particularly (although not exclusively) through the more aggressive tactics and language employed in social media content. Symptom-targeting claims, ambiguous language, and medical aesthetics can distort consumer perception (Figure 1) and purchasing decisions[43], particularly when companies are not consistently transparent about the absence of medical certification, as discussed later in this piece. For non-expert users, distinctions between wellness-oriented support and medical use are not always clear, increasing the likelihood of overestimating clinical relevance or efficacy.

If consumer devices are perceived as medical products, the risk of supplanting medical care becomes real. In addition, consumer neurotech offers an appealing alternative to healthcare: convenient, and in some cases, cheaper than psychological care. These devices can be purchased online, used from the comfort of home, and allow users to bypass the stigma that can be associated with seeking professional mental health support. Taken together, these factors raise the risk that serious conditions remain undiagnosed and untreated, or are only identified later, when severity[44] and chronicity[45] may be greater. This is the case, for example, in some forms of depression, psychosis[46], and generalised anxiety disorder[47] – conditions that are, in some cases, directly referenced in consumer neurotechnology marketing.

From claims to scientific evidence

Given the prevalence of ambiguous, medically adjacent claims in the consumer neurotechnology market, a key question is whether companies provide scientific evidence to substantiate these claims.

In the EU, consumer products are not legally required to provide evidence of efficacy, so long as they meet basic safety and data protection standards (General Data Protection Regulation (GDPR)[48], AI Act[49], etc.). As a result, unlike medical devices, consumer neurotechnologies are generally not subject to mandatory clinical testing or standardised efficacy benchmarks, such as clinical trials.

To understand how companies approach scientific validity in practice, CFG analysed the websites of consumer neurotechnology companies clearly dedicated to wellness applications, with products on the market, resulting in a cohort of 51 companies.

We systematically scanned company webpages for references to scientific evidence supporting product claims and found that 37.3% (n=19) do not provide any form of evidence to support their claims. A further 29.4% (n=15) cite evidence of principle (i.e., studies demonstrating the general benefits of neurofeedback or EEG tracking to improve ADHD symptoms or prevent burnout) but do not provide evidence relating to their specific device. Only 33.3% (n=17) of companies provide evidence relating to the efficacy of the device itself; however, the type and quality of this evidence are highly heterogeneous, ranging from extensive bibliographies of third-party studies (in some cases more than 200 peer-reviewed papers) to company-produced white papers based on small or non-representative samples[50].

These numbers show that a substantial share of consumer wellness neurotechnology products (37%) are marketed without evidential backing, which is notable given that medical language and aesthetics are common (see Gallery). The frequent substitution of evidence of principle for evidence of device efficacy (29% of cases) – a distinction that is rarely made explicit in consumer-facing communication – is also problematic. Claims supported by clinically supervised studies do not necessarily apply to consumer use, where devices are self-administered, unsupervised, used outside defined clinical protocols, and in many cases, built with fewer channels, lower quality materials, or used without correct placement and de-noising of the environment. For example, the fact that vagus nerve stimulation has demonstrated efficacy in improving the symptoms of anxiety in specific clinical contexts does not necessarily imply that a consumer device delivering low-level electrical stimulation at home will produce comparable effects; the same effect needs to be empirically demonstrated.

Some companies within the ecosystem voluntarily provide scientific evidence concerning the efficacy of their devices, and some are very well supported by peer-reviewed quality research. At the same time, the absence of clear standards defining what constitutes acceptable evidence in consumer markets allows a wide range of materials to be presented as “scientific support”. In other words, in the absence of standards, virtually any form of “scientific” reference can be presented in support of marketing narratives.

This results in substantial variability in both the quality and relevance of the evidence provided, with some companies referencing hundreds of peer-reviewed studies conducted by third-party researchers to assess the efficacy of their device independently, while others rely solely on internal white papers. It is worth noting that the strength of the evidence is not necessarily correlated with the medical framing of the claims, and further research could systematically examine the relationship between the medical framing of claims and the evidence standards applied.

What’s at stake: trust, reliability, and incentives

As some researchers have pointed out[51], these nods towards the medical field without proper validation or efficacy testing risk undermining trust in the field as a whole, including for medical applications, especially if users cannot distinguish between evidence-based tools and unproven claims or between what is a medical device and what is not. Devices that fail to deliver on their claims may affect public perception and harm responsible innovation in both consumer and medical markets, as some experts we interviewed noted has already occurred following earlier “mind-reading” and “telekinesis” hype that ultimately failed to deliver. In addition, this lack of clarity can make it difficult for policymakers, investors, the scientific and medical community, and crucially insurance companies involved in reimbursement schemes – a key incentive for pursuing medical certification of low-risk devices – to identify genuinely effective tools, and may ultimately erode trust in the field overall.

A further risk relates to the quality and reliability of the metrics used to infer mental or cognitive states provided by devices without scientific backing. Such feedback about mental state, whether accurate or not, can shape how users interpret their own experiences[52], particularly when subjective and complex mental states are quantified using simplified or novel metrics that lack proven accuracy. Evidence from other consumer wearables[53] suggests that misleading or false signals can negatively affect perceived well-being and confidence in symptom management, a risk that becomes tangible in the neurotechnology field in the absence of validation.

These concerns are further amplified when such inferred signals are translated into personalised insights, recommendations, or guidance through conversational interfaces, such as LLM-based chatbots. These platforms can increase perceived authority and trust[54] even in the absence of robust validation and may disproportionately influence how users interpret outputs such as their mental state, assess their health, or make clinical decisions. While most current applications are not designed with harmful intent, experts consulted for this analysis noted that such dynamics could, in some cases, facilitate forms of cognitive or emotional manipulation[55] by capitalising on users’ insecurities or dissatisfaction with their health, raising additional concerns for consumer protection and autonomy. Although some chatbots, particularly those touching on mental health, have already triggered legal investigations for potential deceptive trade practices[56], consumer companies are increasingly encouraging their use in health-related contexts[57].

Finally, the lack of legal obligation and, importantly, of incentives for wellness companies to test the efficacy of their products means that we risk remaining in the dark about whether these devices could indeed meaningfully complement healthcare.

Disclosure of regulatory status

As discussed in previous sections, consumer neurotechnology companies frequently use medically adjacent claims and aesthetics, while the scientific evidence substantiating these claims is often limited or absent. This raises a further question: to what extent are companies transparent about the non-medical status of their products when they do not have medical clearance?

CFG analysed the webpages of 56 wellness-focused neurotechnology companies worldwide, including firms with products on the market as well as those in development. We systematically reviewed main webpages, privacy policies, and Terms and Conditions to identify disclaimers clarifying that products are consumer devices and not medically certified. For the purpose of this analysis, a disclaimer was considered a text similar to “[Device] is not intended to diagnose, treat, cure, or prevent any diseases”.

We found that 48.2% (n=27) of companies provide no disclaimer regarding the lack of medical certification, either on the main webpage or in policy documents or Terms and Conditions[58]; 28.6% (n=16) include a disclaimer only in their Terms and Conditions or Terms of Use; and 23.2% (n=13) display a disclaimer on the main webpage, typically at the bottom of the page. Remarkably, no company presents a clear disclaimer upon first access to the website.

It is worth noting that all companies based in the EU (with one exception) include disclaimers either at the end of the main webpage or within their Terms and Conditions. However, companies based outside the EU also frequently market and sell products in European markets.

In addition to medical certification, important safety warnings, such as potential interference with pacemakers or other medical devices (which is particularly relevant for devices delivering electrical currents for stimulation), are rarely visible at first glance but stated within Terms and Conditions or on leaflets provided only after buying the product.

What’s at stake: transparency and privacy

When products that use medically adjacent claims and aesthetics fail to clearly disclose their non-medical status, consumers may reasonably assume a level of clinical validation, oversight, or accountability that does not exist. Even where consumers approach such products cautiously, it is often unclear where this information should be located or how prominently it should be presented. Other sectors, such as food, rely on standardised labelling practices that clearly indicate critical information (e.g. expiration dates or ingredient composition). By contrast, information as consequential as medical certification lacks comparable standardisation in the neurotechnology sector, despite the fact that some claims verge on medical relevance without appropriate substantiation, as we have discussed.

These gaps compound a broader and well-documented set of concerns surrounding transparency in neurotechnology, particularly with respect to brain data and cognitive inferences[59], privacy[60], rights[61], and informed consent[62] – issues that have been widely discussed in ethics and law academic contexts. In practice, consumers are rarely clearly informed about how their data are collected, stored, shared, or used. Companies do not consistently disclose data practices on their websites[63] in a clear or accessible manner (and sometimes not at all), increasing the risk that deeply sensitive neural or behavioural data are collected, shared, or monetised without meaningful consumer consent or sufficient safeguards.

This opacity is particularly risky as the neurotechnology ecosystem becomes increasingly specialised, with different actors operating across hardware, software, analytics, and data layers. In this context, transparency around data-sharing (or data-selling) practices is likely to erode further, as new data flows, dependencies, and value chains emerge faster than shared disclosure norms or oversight mechanisms. This risk is amplified by the highly fragmented regulatory landscape for neurotechnology in Europe, which CFG has mapped in detail in previous work. Examples of emerging data dependencies include research use cases using wearables, where companies store and analyse participant data; licensing arrangements in which third parties receive and process user data; and software systems that analyse data collected through devices developed by other actors.

Without clearer transparency around medical status and data practices, efforts to foster an ethical and trustworthy consumer neurotechnology market are likely to remain limited.

Conclusion: structural frictions

The rapid diffusion of biometric wearables, brain-sensing devices, and AI-driven wellness applications into consumer markets demonstrates both strong demand and a growing willingness among individuals to experiment with tools promising improvements in mental well-being. As this analysis shows, the opportunities associated with consumer neurotechnologies are substantial. When developed and deployed responsibly, these tools could meaningfully complement healthcare systems, support prevention and self-care, and expand the empirical basis for neuroscience and mental health research.

At the same time, our findings highlight structural frictions in the current regulatory and market landscape. Ambiguous marketing claims, uneven evidentiary standards, and limited transparency around medical certification coexist within the same ecosystem as more cautious, scientifically grounded approaches. These dynamics reflect incentive structures and regulatory boundaries that have not kept pace with technological developments, namely, the persistence of a regulatory grey area where medical and consumer products are progressively harder to differentiate.

Current regulatory frameworks largely rely on a binary distinction between medical and non-medical devices, yet this distinction no longer reflects the reality of current neurotechnologies, many of which operate in a space that is neither fully clinical nor purely recreational. As discussed throughout this report, the increasing blurring of boundaries between consumer and medical neurotechnologies carries concrete risks. These risks affect not only consumers, but also companies seeking to operate responsibly, as well as healthcare systems exploring how consumer technologies might be safely integrated into care pathways. Even the development of medical applications that are currently the only option for patients with certain conditions could be affected by negative public perceptions of a consumer market that is not always fair and transparent.

Our research shows that key questions around acceptable claims, what constitutes evidence, or transparency obligations remain insufficiently addressed, particularly in the European context, where previous CFG work shows that neurotechnology governance is fragmented across multiple regulatory domains applicable to consumer markets. Greater regulatory cohesion in consumer markets could help set clearer expectations around claims, evidence, and transparency, thereby aligning incentives, supporting consumer trust, and enabling the responsible growth of the sector.

Building on how current regulatory structures shape these strategic trade-offs, the next brief in this series examines how companies navigate the choice between medical and consumer pathways in practice, and the challenges they face when making this decision.

Methodology

Company database

Company data was collected and analysed for CFG’s publication “The Neurotech Consumer Market Atlas” (see publication Methodology). It was sourced from the StartUs Insights Discovery Platform and manually cross-referenced with company press releases, websites, PitchBook and Crunchbase. The analysis of the company data has already been published.

Data is available upon reasonable request and for non-commercial purposes. Please contact us for more information.

LLM-based perceptual classification of neurotechnology companies

Recent work shows that large language models (LLMs) can serve as credible proxies for human judgement in simulated decision-making tasks[64] [65] [66] [67] [68]. Leveraging this capability, we tasked GPT-4o-mini with reading company webpages “as a lay consumer” and assigning each firm to one of three linguistic positions (consumer, borderline, or medical) solely on the basis of the wording the companies use on their webpages. When contrasted with the companies’ verified regulatory status (e.g., FDA/CE clearance or explicit non-medical disclaimers), the resulting match–mismatch matrix provides an estimate of how marketing language may obscure true product classification. A high discordance rate suggests a potential risk to consumer rights and raises concerns about the transparency of industry communication practices.
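As a rough illustration of the match–mismatch matrix described above, the following Python sketch cross-tabulates regulatory classes against perceptual labels and derives a discordance rate. The function names and the toy data are hypothetical and not taken from the study. The sketch also assumes that a "borderline" perceptual label always counts as a disagreement, because the regulatory classes have no borderline value; the brief does not state how this case was handled.

```python
from collections import Counter

REGULATORY = ("consumer", "medical")                # ground-truth classes
PERCEPTUAL = ("consumer", "borderline", "medical")  # positions assigned by the LLM


def mismatch_matrix(pairs):
    """Cross-tabulate (regulatory, perceptual) label pairs into a nested dict."""
    counts = Counter(pairs)
    return {r: {p: counts[(r, p)] for p in PERCEPTUAL} for r in REGULATORY}


def discordance_rate(pairs):
    """Share of companies whose perceptual label differs from the regulatory class.

    Assumption: 'borderline' has no regulatory counterpart, so it is always
    counted as a disagreement here.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("no companies to compare")
    disagreements = sum(1 for regulatory, perceptual in pairs if regulatory != perceptual)
    return disagreements / len(pairs)


# Hypothetical toy data: (regulatory class, perceptual label) per company.
toy = [
    ("consumer", "consumer"),
    ("consumer", "borderline"),
    ("consumer", "medical"),
    ("medical", "medical"),
]
matrix = mismatch_matrix(toy)
rate = discordance_rate(toy)
print(matrix)
print(f"discordance: {rate:.0%}")
```

Keeping the full matrix alongside the single rate makes it possible to see the direction of a mismatch, for example consumer-class companies that read as medical, rather than only its overall frequency.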

- Ground-truth labelling and mismatch rate. Each company was assigned a regulatory class prior to LLM analysis: medical if it already possessed or was actively pursuing formal clearance (FDA, CE, or equivalent) through authorised products, clinical trials, or dedicated fundraising; consumer if it neither held nor sought such clearance or explicitly disclaimed medical intent (e.g., in Terms and Conditions).

- Web-content acquisition. HTML for each company page was downloaded and parsed with BeautifulSoup. All <script>, <style>, and <noscript> elements were discarded, and the remaining visible text was concatenated into plain text for subsequent LLM analysis.

- LLM categorisation. Perceptual classification into medical, borderline or consumer was performed with GPT-4o-mini (temperature = 1.0) via the OpenAI API.

User prompt

You are a consumer tasked with categorizing companies based on their product offerings as presented on their websites.

Categories:

1. Consumer

2. Borderline/Gray Area

3. Medical

Analyze the following content from the website {url} and determine which category the company belongs to.

Pay attention to the overarching message that’s transmitted, not only to the caveats.

Use these curated lists of keywords to classify the company:

WELLNESS_TERMS: productivity, meditation, mindfulness, relaxation, sleep enhancement, focus, gaming, control external devices, entertainment, personal growth, inner peace, self-improvement, self-reflection, well-being, calmness, reduce tension, rejuvenation, self-care, mental clarity

BORDERLINE_MEDICAL_WELLNESS_TERMS: better sleep, burnout, stress management, stress, brain health, mental fog, fatigue relief, emotional support, boost mood, enhanced memory, reduce overwhelm, motivation support, mental resilience, reduce irritability, prevent exhaustion, nervous tension, brain training, mood regulation, emotional balance, fatigue, pain, exhaustion, brain fog, attention, attention deficit, hyperactivity, attention training, bloating, digestion, gastrointestinal discomfort

MEDICAL_TERMS: pain reduction, relieve pain, doctor approved, clinical trial, diagnosis, treatment, therapy, rehabilitation, medical-grade, clinically proven, symptom management, prescription, physical therapy, diagnostic test, FDA cleared, medical procedure, disease prevention, recovery program, medical intervention, therapeutic support, brain health, attention deficit, hyperactivity, irritable bowel syndrome, depression, ADHD, implantable, intracranial, patient, disease, syndrome, anxiety

You MUST provide only the category name.

- Assessment of model variability. To assess stochastic variation, classification was repeated five times per company. The modal category across the five replicates was adopted as the final perceptual label.

- Perceptual-regulatory mismatch. The LLM-derived perceptual category for every company was then compared directly with its regulatory class, and the percentage of disagreements was calculated as a proxy measure of potentially misleading or ambiguous marketing language.
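Two of the steps listed above, visible-text extraction and aggregation of the five replicates, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the code used for the study. It uses the standard-library `html.parser` as a stand-in for BeautifulSoup so that it runs without extra dependencies, and it returns `None` when replicates tie (for example 2-2-1), since the brief does not say how ties were resolved. The names `VisibleText`, `visible_text` and `modal_label` are hypothetical.

```python
from collections import Counter
from html.parser import HTMLParser

SKIPPED_TAGS = {"script", "style", "noscript"}


class VisibleText(HTMLParser):
    """Collects text nodes that are not inside script, style or noscript."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def visible_text(html):
    """Return the remaining visible text of a page as one plain-text string."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def modal_label(replicates):
    """Most frequent label across replicates, or None on a tie (assumption)."""
    ranked = Counter(replicates).most_common()
    if not ranked:
        raise ValueError("no replicates given")
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None  # tie: flag for manual review rather than pick arbitrarily
    return ranked[0][0]


# Hypothetical page and replicate labels, for illustration only.
page = (
    "<html><head><style>p{color:red}</style><script>var x = 1;</script></head>"
    "<body><h1>Sleep better</h1><noscript>Enable JS</noscript>"
    "<p>Clinically proven focus.</p></body></html>"
)
text = visible_text(page)
label = modal_label(["medical", "borderline", "medical", "consumer", "medical"])
tied = modal_label(["medical", "medical", "consumer", "consumer", "borderline"])
print(text)
print(label, tied)
```

With three categories and five replicates a tie is possible, so surfacing it explicitly is one way to avoid the final label depending on an arbitrary ordering.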

[1] Global Wellness Institute. (2024). The global wellness economy reaches a new peak of $6.3 trillion–and is forecast to hit $9 trillion by 2028. https://globalwellnessinstitute.org/press-room/press-releases/the-global-wellness-economy-reaches-a-new-peak-of-6-3-trillion-and-is-forecast-to-hit-9-trillion-by-2028/

[2] McKinsey & Company. (2025). The Future of Wellness trends survey 2024. https://www.mckinsey.com/industries/consumer-packaged-goods/our-insights/future-of-wellness-trends

[3] European Commission. (2023). Mental health – October 2023. Eurobarometer. https://europa.eu/eurobarometer/surveys/detail/3032

[4] European Commission. (2023). Mental health – October 2023. Eurobarometer. https://europa.eu/eurobarometer/surveys/detail/3032

[5] European Psychiatric Association. (2025). Socioeconomic inequalities drive significant gaps in access to mental health care across the European Union [Press release]. https://www.europsy.net/app/uploads/2025/04/Socioeconomic-Inequalities-Drive-Significant-Gaps-in-Access-to-Mental-Health-Care-Across-the-EU.pdf

[6] World Health Organization. (2025). Mental health atlas 2024. https://www.who.int/publications/i/item/9789240114487

[7] McKinsey & Company. (2025). The Future of Wellness trends survey 2024. https://www.mckinsey.com/industries/consumer-packaged-goods/our-insights/future-of-wellness-trends

[8] World Health Organization Regional Office for Europe. (2025). Child and youth mental health in the WHO European Region: Status and actions to strengthen the quality of care. https://www.who.int/europe/publications/i/item/WHO-EURO-2025-12824-52598-81473

[9] Wall, C., et al. (2023). Beyond the clinic: The rise of wearables and smartphones in decentralising healthcare. npj Digital Medicine, 6, 219. https://doi.org/10.1038/s41746-023-00971-z

[10] Matias, I., et al. (2026). Digital biomarkers for brain health: Passive and continuous assessment from wearable sensors. npj Digital Medicine. https://doi.org/10.1038/s41746-026-02340-y

[11] Joyner, M., et al. (2024). Using a standalone ear-EEG device for focal-onset seizure detection. Bioelectronic Medicine, 10(1), 4. https://doi.org/10.1186/s42234-023-00135-0

[12] Flow Neuroscience. (Retrieved March 2026). Medically certified depression treatment at home. https://www.flowneuroscience.com/

[13] Bikson, M., et al. (2026). US FDA approves home-delivered tDCS for treating depression. https://doi.org/10.1016/j.brs.2025.103021

[14] Woodham, R. D., et al. (2024). Home‑based transcranial direct current stimulation treatment for major depressive disorder: A fully remote phase 2 randomized sham‑controlled trial. Nature Medicine, 31(1), 87–95. https://doi.org/10.1038/s41591-024-03305-y

[15] Svempe, L. (2025). The regulatory landscape of health apps in the European Union. Journal of Intellectual Property, Information Technology and E‑Commerce Law, 16(1), 24–34. https://www.jipitec.eu/jipitec/article/view/419

[16] Simon, D. A., et al. (2022). Skating the line between general wellness products and regulated devices: Strategies and implications. Journal of Law and the Biosciences, 9(2). https://doi.org/10.1093/jlb/lsac015

[17] In this brief we mainly focus on practices and risks that affect consumers, while we unpack companies’ perspectives and the reasons that lead them toward choosing the medical or consumer path in a follow-up brief in this series.

[18] Smuck, M., et al. (2021). The emerging clinical role of wearables: factors for successful implementation in healthcare. npj Digit. Med. 4, 45. https://doi.org/10.1038/s41746-021-00418-3

[19] Wall, C., et al. (2023). Beyond the clinic: The rise of wearables and smartphones in decentralising healthcare. npj Digital Medicine, 6, 219. https://doi.org/10.1038/s41746-023-00971-z

[20] Hariharan, U., et al. (2021). Smart Wearable Devices for Remote Patient Monitoring in Healthcare 4.0. In Internet of Medical Things. Internet of Things. Springer, Cham. https://doi.org/10.1007/978-3-030-63937-2_7

[21] Ramses, A., et al. (2021). bioRxiv 2021.06.21.448991. https://doi.org/10.1101/2021.06.21.448991

[22] Ratti, E., et al. (2017). Comparison of Medical and Consumer Wireless EEG Systems for Use in Clinical Trials. Frontiers in Human Neuroscience, 11, 398. https://doi.org/10.3389/fnhum.2017.00398

[23] Stangl, M., Maoz, S.L. & Suthana, N. Mobile cognition: imaging the human brain in the ‘real world’. Nat Rev Neurosci 24, 347–362 (2023). https://doi.org/10.1038/s41583-023-00692-y

[24] InteraXon Inc. (Retrieved March 2026). Muse®. https://www.choosemuse.com/

[25] Huang, J., et al. (2022). Applying Artificial Intelligence to Wearable Sensor Data to Diagnose and Predict Cardiovascular Disease: A Review. Sensors, 22(20), 8002. https://doi.org/10.3390/s22208002

[26] Dubois, J., et al. (2025) A functional neuroimaging biomarker of mild cognitive impairment using TD-fNIRS. npj Dement. 1, 14. https://doi.org/10.1038/s44400-025-00018-y

[27] Apple Inc. (2025). Hypertension Notification Feature on Apple Watch: Validation paper. https://www.apple.com/health/pdf/Hypertension_Notifications_Validation_Paper_September_2025.pdf

[28] Lei, J., & Gillespie, K. (2024). Projected global burden of brain disorders through 2050 (P7‑15.001). Neurology, 102(17_Supplement_1), 3234. https://doi.org/10.1212/WNL.0000000000205009

[29] Cox, C. G., et al. (2024). Alzheimer’s Disease Biomarker Decision-Making among Patients with Mild Cognitive Impairment and Their Care Partners. The Journal of Prevention of Alzheimer’s Disease, 11(2), 285–293. https://doi.org/10.14283/jpad.2024.10

[30] van Dyck, C. H., et al. (2022). Lecanemab in early Alzheimer’s disease. New England Journal of Medicine, 388(1), 9–21. https://doi.org/10.1056/NEJMoa2212948

[31] Hung C-I, et al. (2017) Untreated duration predicted the severity of depression at the two-year follow-up point. PLoS ONE 12(9): e0185119. https://doi.org/10.1371/journal.pone.0185119

[32] U.S. Food and Drug Administration (FDA) or Conformité Européenne (CE) medical clearance, or similar frameworks.

[33] Our purpose is not to single out individual companies but to highlight sector-wide patterns that consistently emerge from the current regulatory and technological context.

[34] Kopka, M., von Kalckreuth, N. & Feufel, M.A. Accuracy of online symptom assessment applications, large language models, and laypeople for self–triage decisions. npj Digit. Med. 8, 178 (2025). https://doi.org/10.1038/s41746-025-01566-6

[35] Gabriels, K., & Goffin, K. (2026). Therapy chatbots and emotional complexity: Do therapy chatbots really empathise? Current Opinion in Psychology, 68, 102263. https://doi.org/10.1016/j.copsyc.2025.102263

[36] Riboli-Sasco, E., et al. (2023). Triage and diagnostic accuracy of online symptom checkers: Systematic review. Journal of Medical Internet Research, 25, e43803. https://doi.org/10.2196/43803

[37] OpenAI. (Accessed March 2026). Introducing ChatGPT health. https://openai.com/index/introducing-chatgpt-health/

[38] Lu, Y., et al. (2025). UXAgent: An LLM-agent-based usability testing framework for web design. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (CHI EA ’25, Yokohama, Japan, 26 April–1 May 2025). Association for Computing Machinery. https://doi.org/10.1145/3706599.3719729

[39] Wang, L., et al. (2024). A survey on large language model based autonomous agents. Frontiers of Computer Science, 18, Article 186345. https://doi.org/10.1007/s11704-024-40231-1

[40] Gurcan, O. (2024). LLM-augmented agent-based modelling for social simulations: Challenges and opportunities. arXiv. https://doi.org/10.48550/arXiv.2405.06700

[41] Park, J. S., et al. (2024). Generative agent simulations of 1,000 people. arXiv. https://doi.org/10.48550/arXiv.2411.10109

[42] Bougie, N., & Watanabe, N. (2025). SimUSER: Simulating user behavior with large language models for recommender system evaluation. arXiv. https://doi.org/10.48550/arXiv.2504.12722

[43] Coates McCall, I., et al. (2019). Owning ethical innovation: Claims about commercial wearable brain technologies. Neuron, 102(4), 728–731. https://doi.org/10.1016/j.neuron.2019.03.026

[44] Hung C-I, et al. (2017) Untreated duration predicted the severity of depression at the two-year follow-up point. PLoS ONE 12(9): e0185119. https://doi.org/10.1371/journal.pone.0185119

[45] Martin-Key, N. A., et al. (2021). The Current State and Diagnostic Accuracy of Digital Mental Health Assessment Tools for Psychiatric Disorders: Protocol for a Systematic Review and Meta-analysis. JMIR Research Protocols, 10(1). https://doi.org/10.2196/25382

[46] Marshall, M., et al. (2005). Association between duration of untreated psychosis and outcome in cohorts of first-episode patients: A systematic review. Archives of General Psychiatry, 62(9), 975–983. https://doi.org/10.1001/archpsyc.62.9.975

[47] Altamura, A. C., et al. (2008). Duration of Untreated Illness as a Predictor of Treatment Response and Clinical Course in Generalized Anxiety Disorder. CNS Spectrums, 13(5), 415–422. https://doi.org/10.1017/S1092852900016588

[48] European Union. (2016). Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation). https://eur-lex.europa.eu/eli/reg/2016/679/oj

[49] European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act). https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32024R1689

[50] White papers that report only results, without describing their methodological approach, were not counted as evidence for a device.

[51] Wexler, A., & Thibault, R. T. (2018). Mind-reading or misleading? Assessing direct-to-consumer electroencephalography (EEG) devices marketed for wellness and their ethical and regulatory implications. Journal of Cognitive Enhancement. https://doi.org/10.1007/s41465-018-0091-2

[52] Fairclough, S. (2023). Neuroadaptive Technology and the Self: a Postphenomenological Perspective. Philos. Technol. 36, 30. https://doi.org/10.1007/s13347-023-00636-5

[53] Tran, K. V., et al. (2023). False Atrial Fibrillation Alerts from Smartwatches are Associated with Decreased Perceived Physical Well-being and Confidence in Chronic Symptoms Management. Cardiology and cardiovascular medicine, 7(2), 97–107. https://doi.org/10.26502/fccm.92920314

[54] Simms, C. (2025). AI is more persuasive than people in online debates. Nature. https://doi.org/10.1038/d41586-025-01599-7

[55] Vinay, R., et al. (2025). Emotional prompting amplifies disinformation generation in AI large language models. Frontiers in Artificial Intelligence, 8, 1543603. https://doi.org/10.3389/frai.2025.1543603

[56] Financial Times. (2025). Meta and Character.ai probed over touting AI mental health advice to children. https://www.ft.com/content/b50dab72-49ff-4a09-95f1-26a85267c02e

[57] OpenAI. (2025). Introducing GPT-5 [Video]. YouTube. https://www.youtube.com/live/0Uu_VJeVVfo?si=6Db9HKeOwKN0K24E&t=2070

[58] While it is possible that disclaimers are presented only after purchase, such practices would raise concerns regarding fair and informed consumer choice.

[59] Magee, P., et al. (2024). Beyond neural data: Cognitive biometrics and mental privacy. Neuron, 112(18), 3017–3028. https://doi.org/10.1016/j.neuron.2024.09.004

[60] Farahany, N. A. (2023). The battle for your brain: Defending the right to think freely in the age of neurotechnology. St. Martin’s Press.

[61] Ligthart, S., et al. (2023). Minding Rights: Mapping Ethical and Legal Foundations of ‘Neurorights.’ Cambridge Quarterly of Healthcare Ethics 32, no. 4, 461–81. https://doi.org/10.1017/S0963180123000245.

[62] Rivas Velarde, M.C., et al. (2024) Consent as a compositional act – a framework that provides clarity for the retention and use of data. Philos Ethics Humanit Med 19, 2. https://doi.org/10.1186/s13010-024-00152-0

[63] Genser, J., et al. (2024). Safeguarding brain data: Assessing the privacy practices of consumer neurotechnology companies. Neurorights Foundation. https://perseus-strategies.com/wp-content/uploads/FINAL_Consumer_Neurotechnology_Report_Neurorights_Foundation_April-1.pdf

[64] Lu, Y., et al. (2025). UXAgent: An LLM-agent-based usability testing framework for web design. In Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (CHI EA ’25, Yokohama, Japan, 26 April–1 May 2025). Association for Computing Machinery. https://doi.org/10.1145/3706599.3719729

[65] Wang, L., et al. (2024). A survey on large language model based autonomous agents. Frontiers of Computer Science, 18, Article 186345. https://doi.org/10.1007/s11704-024-40231-1

[66] Gurcan, O. (2024). LLM-augmented agent-based modelling for social simulations: Challenges and opportunities. arXiv. https://doi.org/10.48550/arXiv.2405.06700

[67] Park, J. S., et al. (2024). Generative agent simulations of 1,000 people. arXiv. https://doi.org/10.48550/arXiv.2411.10109

[68] Bougie, N., & Watanabe, N. (2025). SimUSER: Simulating user behavior with large language models for recommender system evaluation. arXiv. https://doi.org/10.48550/arXiv.2504.12722