Response to the European Open Digital Ecosystem Strategy

This submission focuses on open-weight AI, i.e. systems whose parameters are publicly available for download and adaptation, rather than open-source technologies broadly. We draw this distinction because open-weight AI presents governance challenges that traditional open-source software does not: once model weights are released, they cannot be recalled, safety guardrails can be removed with minimal effort, and thousands of safety-stripped variants already circulate freely. Simply transplanting broader open-source policy arguments to advanced AI models could undermine the specialised governance these systems require.

The geopolitical context makes this urgent. Today’s open-weight landscape is dominated by Chinese models (DeepSeek, Qwen), while American frontier systems (OpenAI, Anthropic, Google) remain closed. While Europe’s Mistral also has open-weight general-purpose models, it does not compete at the frontier. Any strategy framing open source as a path to European sovereignty must reckon with the fact that uncritical adoption of Chinese open-weight models simply substitutes one dependency for another. Meanwhile, Europe’s own position is precarious: it hosts only 5–6% of global AI compute versus roughly 75% in the US, faces acute shortages of advanced AI engineers, and captures just 6% of global AI startup funding.

We argue that Europe needs a dual-track strategy. The first track builds societal resilience: the safety infrastructure, governance standards, and defensive capabilities needed to manage misuse risks from powerful AI models Europe does not control. The second track reduces strategic dependence: directing the EU’s approximately €2 trillion in annual public procurement toward European open-weight developers to build a sustainable domestic ecosystem, without relying on direct subsidies. Neither track works alone. Resilience without alternatives means permanent dependency; alternatives without resilience risk a single misuse incident discrediting openness altogether.

Concretely, this means developing tiered release standards where openness is granted in proportion to demonstrated safety, with AI Safety and Security Institutes (AISIs) leading risk threshold development. It means sustained investment in technical safeguards — tamper-resistant architectures, machine unlearning, and restricted-access channels for frontier models. It means context-aware procurement that prioritises European open-weight solutions where they offer clear sovereignty benefits — cybersecurity, public services, SME adoption, research — without mandating openness where closed models remain materially superior. And it means societal preparedness: AI-specific incident monitoring, cross-border threat intelligence, and targeted support that raises the floor of resilience for businesses and critical infrastructure operators alike.

The window for establishing governance frameworks that enable safe openness is narrowing as model capabilities advance. Openness and safety are not mutually exclusive, but pairing them requires deliberate investment in both tracks, starting now.

Introduction

Open-source digital technologies hold significant potential for strengthening European digital sovereignty by enabling local hosting, independent auditing, and modification without dependence on foreign providers.

This submission argues that Europe needs a dual-track strategy for open-source artificial intelligence. The first track focuses on building societal resilience: developing the safety infrastructure, governance standards, and defensive capabilities needed to manage risks from powerful AI models that Europe does not control. The second track focuses on reducing dependence over time: strategically directing public procurement toward European open-weight developers to build a sustainable domestic ecosystem. Neither track alone is sufficient. Resilience without alternatives leaves Europe permanently dependent on foreign providers; alternatives without resilience risk undermining public trust in openness itself. The path forward is not to choose between openness and safety, but to pursue both in parallel.

We focus specifically on open-weight advanced artificial intelligence rather than open-source technologies broadly. The term “open-weight” describes AI systems whose parameters are publicly available for download and adaptation, and which present distinctive governance challenges. Unlike traditional open-source software, where openness generally enhances security through community review, open-weight AI models enable users to remove safety guardrails with minimal effort, creating risks that cannot be recalled once weights are released. We use “open-weight” rather than “open-source” throughout to reflect this distinction.

The current geopolitical reality complicates any straightforward advocacy for openness. Today’s landscape is defined by American closed-source models and Chinese open-weight models. DeepSeek and Alibaba’s Qwen series now dominate global open-weight adoption, while frontier American systems from OpenAI, Anthropic, and Google remain closed.[1] Any strategy based on open source as a path to European sovereignty must reckon with the fact that uncritical adoption of Chinese open-weight models simply substitutes one dependency for another. Europe needs alternatives to dependence on both American closed-source models and Chinese open-weight models.

Open-weight AI models sit on a knife’s edge. Handled well, they expand access and drive innovation; handled poorly, one serious misuse incident could trigger restrictive responses that endanger openness altogether.[3] The nuclear sector offers a cautionary precedent: safety incidents did not merely harm individuals but fundamentally reshaped public acceptance and regulatory frameworks for decades. For open-weight AI to deliver on its promise, safety cannot be an afterthought. This submission therefore argues that realising the sovereignty benefits of open-source AI requires parallel investment in safety infrastructure, governance standards, and societal preparedness.

The Current Landscape

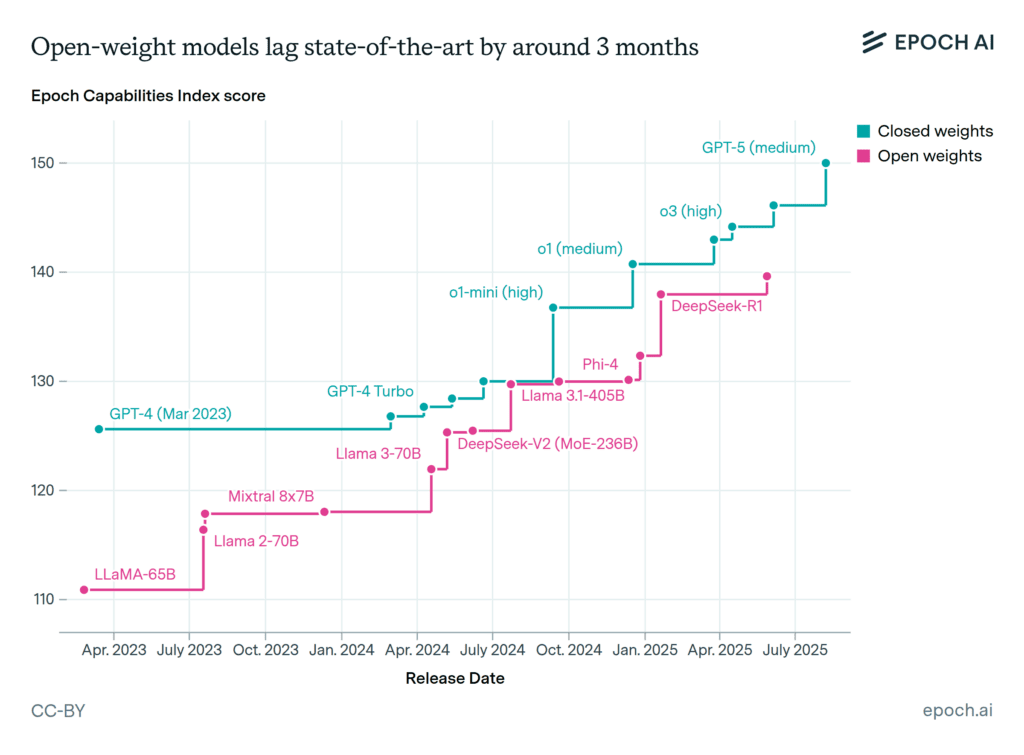

The capability gap between open-weight and closed models has narrowed substantially. According to Epoch AI’s analysis using their Capabilities Index, frontier open-weight models now lag behind the most capable closed models by approximately three months on average (see Figure 1).[12]

However, raw capability is only part of the picture. The competitive advantage of leading AI providers increasingly lies not in model weights but in the full stack around them: deployment infrastructure, safety tooling, enterprise compliance frameworks, and the technical capacity to iterate rapidly on all of these. Open-weight models offer none of this by default. For most European organisations, adopting open-weight AI means either building this stack internally, which requires ML engineering talent that Europe already struggles to retain, or accepting a patchwork of third-party solutions that rarely match the integrated experience of closed providers.

Presently, China has captured global leadership in open-weight development. Nearly all leading Chinese AI models are released with open weights, while American frontier models remain closed. Singapore’s national AI programme now builds on Alibaba’s Qwen; Huawei markets DeepSeek integration across African markets. Even American companies increasingly adopt Chinese open-weight models for downstream applications.

Meta and Mistral also release open-weight models. Meta, however, releases only selected models with open weights while retaining its most advanced systems; its strategic interest lies in commoditising capabilities to undercut closed competitors. Mistral, Europe’s leading frontier AI developer, likewise combines open releases with proprietary models, but its models currently lag 6-12 months behind leading US and Chinese systems on standard benchmarks while costing more per token.[35] Betting on any single model provider that is substantially behind the frontier would concentrate strategic risk; a diversified ecosystem of European developers offers more robust foundations for sovereignty.

Europe’s position in this landscape is precarious. The EU hosts approximately 5-6% of global AI compute capacity, compared to roughly 75% in the United States.[14] The State of AI Report 2025 notes that US compute capacity is seventeen times that of the EU.[15]

Beyond infrastructure, Europe faces an acute shortage of advanced AI engineers—the researchers capable of developing cutting-edge models—with fewer than 0.63 qualified candidates per vacancy for these positions. While Europe trains substantial AI talent, it loses its best researchers to the US, and what remains is fragmented across many smaller hubs rather than concentrated in the ecosystems that drive breakthrough development.[35]

These technical disadvantages are compounded by structural challenges. Fragmented capital markets prevent European AI companies from scaling: only 6% of global AI startup funding in the first half of 2024 went to EU-based companies. Consensus-based decision-making creates systematic delays relative to more centralised competitors. The lack of a true single market for AI deployment—with 27 different healthcare data regimes, education technology standards, and public procurement processes—forces companies to engage selectively with individual Member States rather than scaling across Europe.[35]

European institutions have begun responding to these challenges. The InvestAI initiative aims to mobilise €200 billion in AI investment; the EuroHPC programme operates 19 AI Factories; consortia like OpenEuroLLM are developing compliant multilingual foundation models;[16] and the recently announced Frontier AI Initiative will pursue sovereign frontier model development.[36]

Yet the current funding architecture has a notable gap: substantial resources flow to compute infrastructure and model training, but comparatively little to societal resilience measures that would allow Europe to benefit from advanced AI, including the models it does not control, without unacceptable risk. Addressing this imbalance through Horizon Europe and national R&D programmes would complement the competitiveness agenda with the preparedness track this submission argues is essential.

The Value Proposition Conditional on Safety, and Governance Challenges

The economic case for open source is substantial. A 2021 European Commission study found that EU companies invested approximately €1 billion in open-source software in 2018, generating an economic impact of between €65 and €95 billion on the European economy.[20] Econometric research estimates that if no country contributed to Open-Source Software development, GDP for the average country would be 2.2% lower in the long run.[21] For AI specifically, open-weight models enable startups, researchers, and public institutions to access capabilities that would otherwise remain locked behind API paywalls. Independent researchers can study and stress-test systems directly. Domain-specific fine-tuning becomes possible without negotiating commercial arrangements with foreign providers.[2] GitHub’s 2024 annual report documented over 150,000 generative AI projects, representing 98% growth year-on-year, with particular acceleration among contributors from emerging economies.[22]

However, these benefits must be weighed against a fundamental governance challenge: once an open-weight model is released, it cannot be recalled. Copies proliferate across mirrors, torrents, and peer-to-peer networks. Fine-tuning to remove safety guardrails requires minimal expertise or cost.[9] On Hugging Face, thousands of “abliterated” versions of advanced models, with safety measures deliberately stripped, are already freely available.[3]

The International AI Safety Report (often described as the IPCC Report for AI) warned that open-weight models can pose risks “by facilitating malicious or misguided use that is difficult or impossible for the developer of the model to monitor or mitigate,” noting that once weights are publicly available, “there is no way to implement a wholesale rollback of all existing copies or ensure that all existing copies receive safety updates.”[24] The domains of concern are not abstract: cybersecurity researchers have documented purpose-built “malicious LLMs” and jailbroken general-purpose models assisting cybercrime operations; biosecurity experts warn that capable models could lower barriers to acquiring dangerous pathogens. Recent research demonstrates that even well-meaning users can accidentally weaken safety systems during fine-tuning, with standard evaluations failing to detect such regressions.[25]

Currently, no commonly adopted industry standard exists for assessing under what conditions advanced AI models should be released as open-weight.[2] The decision rests solely on developer judgment, with no shared safety thresholds or verification requirements. When OpenAI delayed its open-weight release for additional safety testing, it applied internal frameworks without external oversight. DeepSeek and Alibaba followed with comparable models but presented no equivalent evaluations. Today, a model considered dangerous for open release by one company can be freely published by another.[3]

This governance gap extends to post-release monitoring. Licensing terms attempting to prohibit misuse face significant enforcement challenges. The alleged use of Meta’s open-weight models for military applications, despite explicit licence prohibitions, demonstrated that legal protections offer little practical security once weights become publicly available.[26]

Industry perspectives on open source remain divided. Many smaller firms cite intellectual property protection as essential to commercialisation, viewing open-source-by-design as a threat to business models. Cybersecurity communities warn that decentralised development can reduce oversight and increase vulnerabilities.[27] These tensions suggest that blanket open-source requirements would face sustained resistance.

Cybersecurity offers a concrete illustration of why both openness and resilience matter. On one hand, AI-powered defensive tools (automated anomaly detection, rapid vulnerability patching, threat intelligence synthesis) represent a critical frontier for European security. Open-weight models enable European institutions to deploy such capabilities under domestic control, without routing sensitive security data through foreign providers. On the other hand, the same capabilities that enable defence can enable attack. Anthropic recently uncovered a large espionage campaign in which attackers used AI agents to infiltrate major global targets.[37] Open-weight releases of capable models necessarily provide these capabilities to adversaries as well as defenders.

The path forward is not to choose between openness and safety, but to pursue both in parallel. This means investing in technical safeguards that make open-weight models harder to misuse—tamper-resistant architectures, machine unlearning techniques, structured access tiers. It also means investing in societal resilience: hardening critical infrastructure against AI-enabled threats, building incident monitoring capabilities, and ensuring that defensive applications of AI outpace offensive ones. Without the first track, open-weight releases pose unmanaged risks; without the second, even perfect model-level safety cannot protect against capable adversaries using foreign or legacy systems.

Open-weight innovation is only as inclusive as its safeguards. Without deliberate investment in safety, accessibility risks turning into exposure.[2] We therefore argue for a framing that holds both imperatives together: we need open source for resilience, but we also need resilience for open source.

Concrete Measures

Standards and Evaluation Frameworks

We recommend the development of tiered release standards where openness is granted in proportion to demonstrated safety. Rather than treating open-versus-closed as binary, such frameworks would establish clear thresholds: models below defined risk levels for capability, domain, and deployment setting face minimal restrictions; more advanced systems require progressively stronger evidence that risks can be effectively mitigated.[2]
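
As a purely illustrative sketch of how such a tiered framework might be expressed, the Python below maps an assessed risk level to the evidence a release would need. The tier names, the rule that the highest of the three dimensions governs, and the evidence labels are all hypothetical assumptions made for illustration; they are not drawn from any existing standard or from the cited report.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    """Hypothetical ordinal risk levels; real thresholds would be set by AISIs."""
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Hypothetical evidence required at each tier before an open-weight release.
REQUIRED_EVIDENCE = {
    RiskLevel.LOW: set(),
    RiskLevel.MODERATE: {"internal_evaluation", "third_party_evaluation"},
    RiskLevel.HIGH: {
        "internal_evaluation",
        "third_party_evaluation",
        "tamper_resistance_testing",
        "safety_case",
    },
}


@dataclass(frozen=True)
class ModelAssessment:
    capability: RiskLevel
    domain: RiskLevel
    deployment: RiskLevel
    evidence: frozenset = frozenset()

    def overall_risk(self) -> RiskLevel:
        # Conservative assumption: the highest of the three dimensions governs.
        return max(self.capability, self.domain, self.deployment)


def missing_evidence(assessment: ModelAssessment) -> set:
    """Return evidence still needed before open release (empty set = eligible)."""
    return REQUIRED_EVIDENCE[assessment.overall_risk()] - assessment.evidence
```

Under these assumptions, a model rated low on every dimension would have no outstanding requirements, while a model rated high on any single dimension would need the full evidence set before release.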

AI Safety and Security Institutes are uniquely positioned to lead development of risk thresholds and testing methodologies. The UK AI Security Institute’s safety case approach provides a template: structured frameworks combining precise safety claims, supporting evidence, and logical arguments.[28] The EU AI Office’s emerging network of model evaluators could extend beyond regulatory enforcement to develop specific frameworks for open-weight releases.

Domain experts in areas where misuse risk is acute, such as critical infrastructure and biosecurity, should be embedded in AI safety governance. The Organisation for the Prohibition of Chemical Weapons maintains a Scientific Advisory Board that routinely evaluates how new technologies affect treaty compliance.[29] Similar structures within AI Safety and Security Institutes could ensure evaluations remain grounded in evolving technical realities.

Technical Safeguards

Technical safeguards for open-weight models are underfunded relative to need. Promising approaches include tamper-resistant architectures that resist removal of safety measures even when weights are modified, and machine unlearning techniques that selectively erase high-risk capabilities while preserving useful functions.[2] These remain early-stage, and there is no guarantee they will prove robust against determined adversaries. But the potential payoff justifies sustained research investment even under uncertainty.

For frontier models that exceed defined safety thresholds yet offer clear research value, restricted access channels offer a middle ground. Verified-access programmes could grant downloads only to credentialed researchers, drawing from practices in biomedical data repositories like dbGaP or UK Biobank.[30] Sandboxed environments allow researchers to interact with models without accessing raw weights, enabling meaningful experimentation while maintaining security boundaries.

Enterprise-grade safety tools like Protect AI’s Guardian platform demonstrate commercial viability in this space. Such tools scan models for architectural backdoors and runtime exploits, providing defence layers for organisations deploying open-weight models in critical applications.[31] Support for scaling such technologies serves both safety and European industrial objectives.

Public Procurement and Sectoral Priorities

EU public procurement represents approximately €2 trillion annually.[32] Strategically deployed, procurement can advance both tracks of the strategy outlined above: it builds resilience by accelerating adoption of AI-powered tools under European control, while simultaneously creating the market demand that sustains European open-weight developers commercially – without requiring direct subsidies.

Purchasing open-source technology from European developers like Mistral, as well as smaller firms that might not otherwise achieve commercial viability, builds a diversified ecosystem more robust than dependence on any single provider. This logic should extend to compute allocation: applicants to AI Factories proposing open-source approaches could receive preferential treatment in resource allocation, creating incentives throughout the development pipeline.

AI Safety and Security Institutes offer another lever for ecosystem development. By commissioning safety evaluations and red-teaming from European firms, and by publishing open evaluation frameworks that European developers can implement, these institutes create demand for domestic AI safety expertise while helping European companies meet emerging standards.

We recommend that such procurement strategies be context-aware rather than blanket mandates. It should be noted, for instance, that closed models will likely remain superior to open-weight alternatives for many applications. Governments often need the best available technology, not the best open technology. Consequently, mandating open-source solutions where they are materially inferior would compromise service delivery and could discredit the broader sovereignty agenda. The goal should be maintaining optionality and avoiding lock-in to any single provider, rather than prescribing openness as a blanket requirement.

That said, certain domains warrant targeted approaches where open-source AI offers clear sovereignty benefits and where European institutions can develop independent evaluation capacity:

Critical infrastructure and cybersecurity: AI-powered defence operating under European control, paired with investments in resilience against AI-enabled threats. ENISA reports that over 80% of phishing campaigns targeting the EU now use AI-generated content [38], while malicious AI tools automate social engineering and malware development at scale. Defensive AI must operate at equivalent speed—but European institutions currently lack sovereign options. Open-weight models deployed on European infrastructure could enable real-time threat analysis and automated incident response without routing sensitive security data through foreign cloud providers.

Public sector applications: Healthcare diagnostics, administrative automation, and citizen services where data sovereignty concerns are acute and where European institutions can serve as sophisticated deployers capable of evaluating safety claims.

SME adoption: Enterprise AI adoption in Europe stands at 55%, but SME adoption lags at just 17%.[34] Lightweight open-weight models suited to tasks not requiring frontier performance offer lower inference costs accessible to smaller firms. Targeted procurement and support programmes for SME AI adoption could drive immediate diffusion while longer-term investments target frontier capability development.

Research and education: Open-weight models enable academic study of AI systems that closed alternatives preclude. Supporting researcher access, potentially through verified-access programmes for advanced models, serves both scientific and governance objectives.

Targeted procurement criteria might include: requirements for European maintenance of open-source components; exit options from proprietary systems; and preferences for solutions with demonstrated safety evaluations over those with equivalent capabilities but no published assessments.

We note that domain-specific data may represent a more governable chokepoint than model distribution. In high-risk domains like biosecurity or critical infrastructure, the specialised datasets required to fine-tune models for dangerous applications are often harder to obtain than the models themselves.[9] Governance strategies might therefore focus not only on model release but on protecting the data that enables harmful adaptation.

Societal Preparedness

Even with robust safeguards, some misuse risk is irreducible. Societal preparedness must therefore advance in parallel.

Cybersecurity illustrates the stakes. AI-powered defensive capabilities can operate at machine speed, addressing attacks that outpace human response [8] via automated anomaly detection, rapid vulnerability patching, and threat intelligence synthesis.

But the same capabilities that enable defence can enable attack. Anthropic recently uncovered a large espionage campaign in which attackers used AI agents to infiltrate major global targets.[6] Purpose-built “malicious LLMs” and jailbroken general-purpose models already assist cybercrime operations.[7]

This domain exemplifies our core argument: we need open source for resilience, but we also need resilience for open source.

The path forward is not to abandon openness but to pair it with investments that tip the balance toward defence: hardening techniques that survive adversarial modification, incident monitoring infrastructure, and cross-border intelligence sharing on AI-enabled threats.

Governments can actively incentivise adoption of AI-powered cyber defence through concrete mechanisms: procurement frameworks requiring AI-enhanced security tools for contractors handling sensitive data; co-funding programmes for SME adoption of defensive AI capabilities; and mandatory AI security assessments for critical infrastructure operators, paired with subsidised access to approved tools.

More broadly, the AI Act’s AI literacy mandate provides a foundation, but implementation should extend beyond surface compliance. For critical infrastructure operators, this means structured training for operational staff, IT security teams, and management on AI-specific threat vectors and defensive capabilities.[10]

And the need extends well beyond critical infrastructure. Mid-sized businesses often lack both the resources and expertise to adopt AI-powered defences, making them attractive targets for automated ransomware campaigns at scale. Targeted support programmes, for example subsidised training or simplified access to enterprise-grade defensive tools, could significantly raise the floor of societal resilience.

National and international cybersecurity agencies, as well as critical infrastructure agencies, should establish AI-specific incident monitoring capabilities, tracking patterns like AI-generated exploit code and model-assisted vulnerability scanning. Structured reporting infrastructure must be built to capture and classify such incidents systematically. The OECD’s common reporting framework for AI incidents offers a template for interoperability between national systems.[11]
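
To make the idea of structured reporting more concrete, the following Python sketch shows one possible shape for an incident record, together with a simple tally by category. The field names and category labels are hypothetical assumptions (the two named categories simply mirror the patterns mentioned above); they do not reproduce the OECD framework or any agency's actual schema.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from enum import Enum


class IncidentCategory(Enum):
    """Hypothetical categories mirroring the patterns named in the text."""
    AI_GENERATED_EXPLOIT_CODE = "ai_generated_exploit_code"
    MODEL_ASSISTED_VULNERABILITY_SCANNING = "model_assisted_vulnerability_scanning"
    OTHER = "other"


@dataclass(frozen=True)
class IncidentReport:
    reported_on: date
    member_state: str                 # assumed: ISO 3166-1 alpha-2 code
    category: IncidentCategory
    open_weight_model_involved: bool  # assumed field, for later analysis
    summary: str


def tally_by_category(reports):
    """Count reports per category so trends can be compared across systems."""
    return Counter(report.category for report in reports)
```

A shared record structure of this kind is what would allow national systems to exchange and aggregate reports; the specific fields would need to be agreed between agencies.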

Conclusion

Realising the sovereignty benefits of open-source AI requires parallel investment in safety and security infrastructure. The window for establishing governance frameworks that enable safe openness, as well as societal resilience, is narrowing as capabilities advance. Without substantial progress, each new open-weight model release represents an uncontrolled experiment on the public.

Openness and safety are not mutually exclusive. A tiered approach, standards development through AI Safety and Security Institutes, technical safeguards investment, and targeted procurement criteria can together create conditions where open-source AI delivers on its promise without exposing Europe to unmanaged risks. The goal is to move beyond the binary of open versus closed, toward a framework where openness is earned through demonstrated safety.

Sources

[1] Epoch AI, “Chinese AI models have lagged the US frontier by 7 months on average since 2023,” January 2026. https://epoch.ai/data-insights/us-vs-china-eci

[2] Centre for Future Generations, “Beyond the binary: A nuanced path for open-weight advanced AI,” July 2025. https://cfg.eu/beyond-the-binary/

[3] Centre for Future Generations, “Can open-weight models ever be safe?,” January 2026. https://cfg.eu/can-open-weight-models-ever-be-safe/

[4] Emberson, L., “Open-weight models lag state-of-the-art by around 3 months on average,” Epoch AI, October 2025. https://epoch.ai/data-insights/open-weights-vs-closed-weights-models

[5] Epoch AI, “Models with downloadable weights currently lag behind the top-performing models,” February 2025. https://epoch.ai/data-insights/open-vs-closed-model-performance

[6] Anthropic, “Disrupting the first reported AI-orchestrated cyber espionage campaign.” https://assets.anthropic.com/m/ec212e6566a0d47/original/Disrupting-the-first-reported-AI-orchestrated-cyber-espionage-campaign.pdf

[7] Europol, “ChatGPT: The Impact of Large Language Models on Law Enforcement,” 2023

[8] Centre for Future Generations, “Preparing for AI Futures: Policy Options for Europe,” 2026.

[9] Kembery, E., “Towards Responsible Governing AI Proliferation,” arXiv:2412.13821, December 2024.

[10] Regulation (EU) 2024/1689 (AI Act), Article 4.

[11] OECD, “AI Incidents Monitor framework,” 2025

[12] Emberson, L., “Open-weight models lag state-of-the-art by around 3 months on average,” Epoch AI, October 2025. https://epoch.ai/data-insights/open-weights-vs-closed-weights-models

[13] The Decoder, “China captured the global lead in open-weight AI development during 2025, Stanford analysis shows,” January 2026. https://the-decoder.com/china-captured-the-global-lead-in-open-weight-ai-development-during-2025-stanford-analysis-shows/

[14] Epoch AI, “Tracking AI infrastructure by country,” 2025. https://epoch.ai/data

[15] Benaich, N. and Hogarth, I., State of AI Report 2025. https://www.stateof.ai/

[16] EuroHPC JU, “The EuroHPC JU Selects Six Additional AI Factories to Expand Europe’s AI Capabilities,” October 2025. https://www.eurohpc-ju.europa.eu/

[17] EuroHPC JU, “JUPITER Officially Propels Europe into the Exascale Era,” November 2025. https://www.eurohpc-ju.europa.eu/

[18] Slator, “Tilde Unveils European Multilingual AI Model With Safeguards Against Disinformation.” https://slator.com/tilde-unveils-european-multilingual-ai-model-with-safeguards-against-disinformation/

[19] InfoQ, “OpenEuroLLM: Europe’s New Initiative for Open-Source AI Development,” February 2025. https://www.infoq.com/news/2025/02/open-euro-llm/

[20] Blind, K., Böhm, M., Grzegorzewska, P., Katz, A., Muto, S., Pätsch, S., and Schubert, T., “The impact of Open Source Software and Hardware on technological independence, competitiveness and innovation in the EU economy,” European Commission, September 2021. https://digital-strategy.ec.europa.eu/en/library/study-about-impact-open-source-software-and-hardware-technological-independence-competitiveness-and

[21] Blind, K. and Schubert, T., “Estimating the GDP effect of Open Source Software and its complementarities with R&D and patents: evidence and policy implications,” The Journal of Technology Transfer, vol. 49, pp. 466-491, 2024. https://doi.org/10.1007/s10961-023-09993-x

[22] GitHub, “Octoverse 2024: AI leads Python to top language as the number of global developers surges,” October 2024. https://github.blog/news-insights/octoverse/octoverse-2024/

[24] Bengio, Y. et al., “International AI Safety Report 2025,” January 2025. https://internationalaisafetyreport.org/

[25] Qi, X. et al., “Fine-tuning Aligned Language Models Compromises Safety, Even When Users Do Not Intend To!” arXiv:2310.03693, 2023; Lermen, S. et al., “LoRA Fine-tuning Efficiently Undoes Safety Training in Llama 2-Chat 70B,” arXiv:2310.20624, 2023.

[26] Reuters, “Exclusive: Chinese researchers develop AI model for military use on back of Meta’s Llama,” November 2024. https://www.reuters.com/technology/artificial-intelligence/chinese-researchers-develop-ai-model-military-use-back-metas-llama-2024-11-01/

[27] Microsoft, “Open Source Software Supply Chain Threats.” https://www.microsoft.com/en-us/securityengineering/opensource/ossthreats

[28] UK AI Security Institute, “Safety Cases: A Scalable Approach to Frontier AI Safety,” 2025. See also: UK AISI, “Safety case template for ‘inability’ arguments,” May 2025. https://www.gov.uk/government/organisations/ai-security-institute

[29] Organisation for the Prohibition of Chemical Weapons, Scientific Advisory Board. https://www.opcw.org/about/subsidiary-bodies/scientific-advisory-board

[30] National Center for Biotechnology Information, “Database of Genotypes and Phenotypes (dbGaP).” https://www.ncbi.nlm.nih.gov/gap/; UK Biobank, “Access procedures for researchers.” https://www.ukbiobank.ac.uk/

[31] Protect AI, “Guardian: Enterprise AI Security Platform,” 2025. https://protectai.com/guardian

[32] European Commission, “Public Procurement,” 2025. https://single-market-economy.ec.europa.eu/single-market/public-procurement_en

[33] Hackett, W., “Digital sovereignty requires funding, not just adoption,” January 2026. https://www.willhackett.uk/eu-open-source-sovereignty/

[34] Eurostat, “Use of artificial intelligence in enterprises.” https://ec.europa.eu/eurostat/statistics-explained/SEPDF/cache/106920.pdf

[35] Centre for Future Generations, “Europe and the geopolitics of AGI.” https://cfg.eu/the-geopolitics-of-agi/

[36] Centre for Future Generations, “Frontier AI Initiative.” https://cfg.eu/frontier-ai-initiative/

[37] Anthropic, “Disrupting the first reported AI-orchestrated cyber espionage campaign”, https://assets.anthropic.com/m/ec212e6566a0d47/original/Disrupting-the-first-reported-AI-orchestrated-cyber-espionage-campaign.pdf

[38] European Union Agency for Cybersecurity, “ENISA Threat Landscape 2025”, https://www.enisa.europa.eu/sites/default/files/2025-11/ENISA%20Threat%20Landscape%202025.pdf