AI preparedness: Robust policy options for Europe

Executive Summary

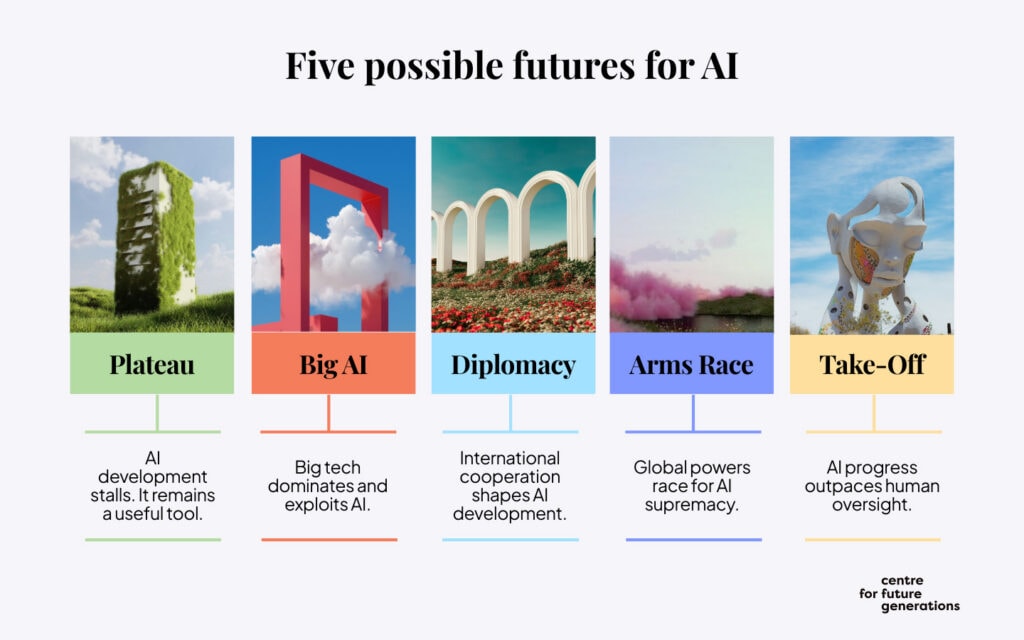

While the development of artificial intelligence is accelerating, its ultimate trajectory remains radically uncertain. In our ‘AI Possible Futures’[1] report, we mapped five core trajectories AI development might take—from Plateau (where progress slows) to Takeoff (where AI systems automate their own improvement), with Big AI (market consolidation), Arms Race (US-China competition), and Diplomacy (international cooperation) in between. Each scenario demands different responses, creating a strategic trap for policymakers: betting heavily on a single outcome risks unpreparedness if another scenario unfolds.

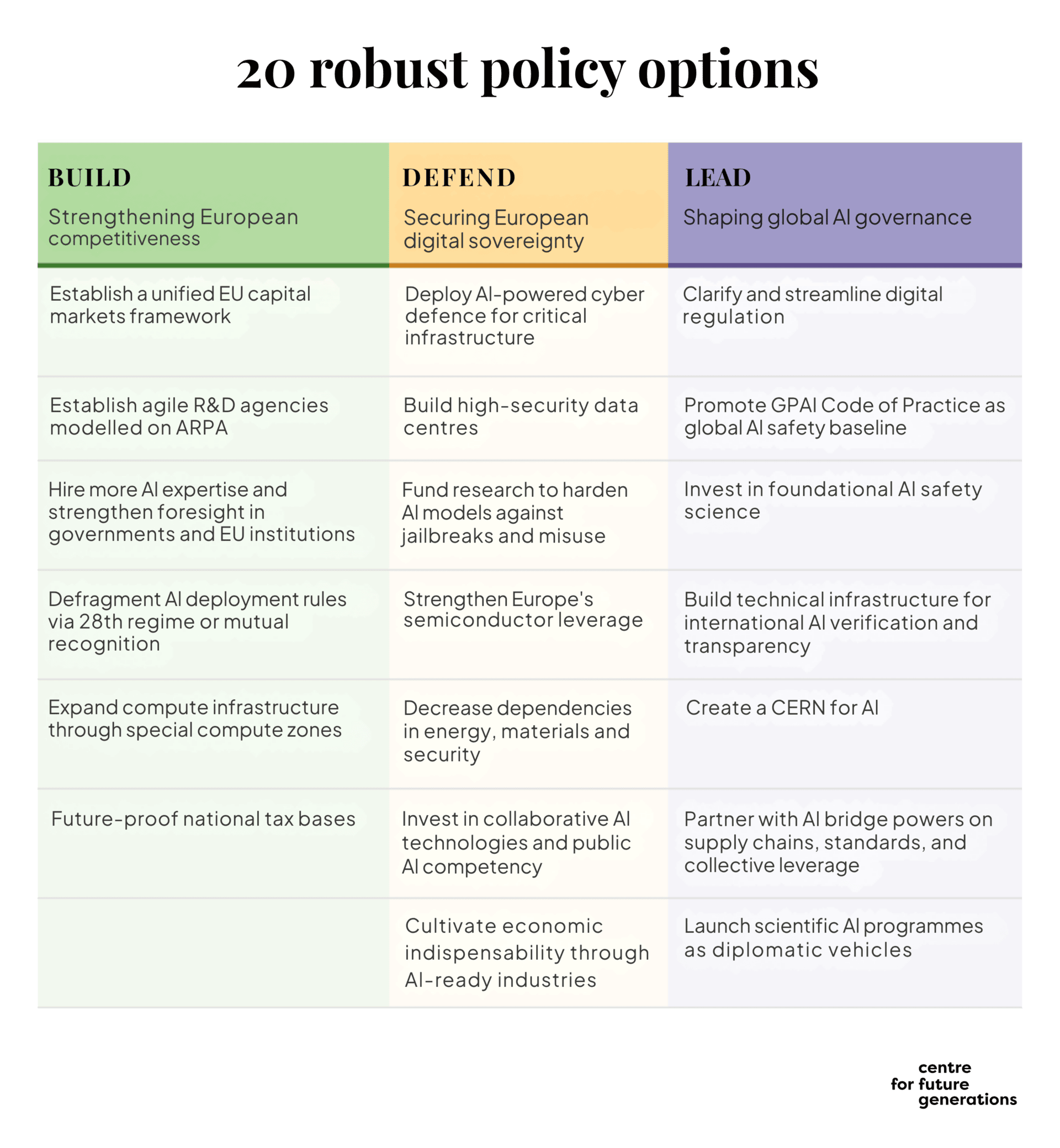

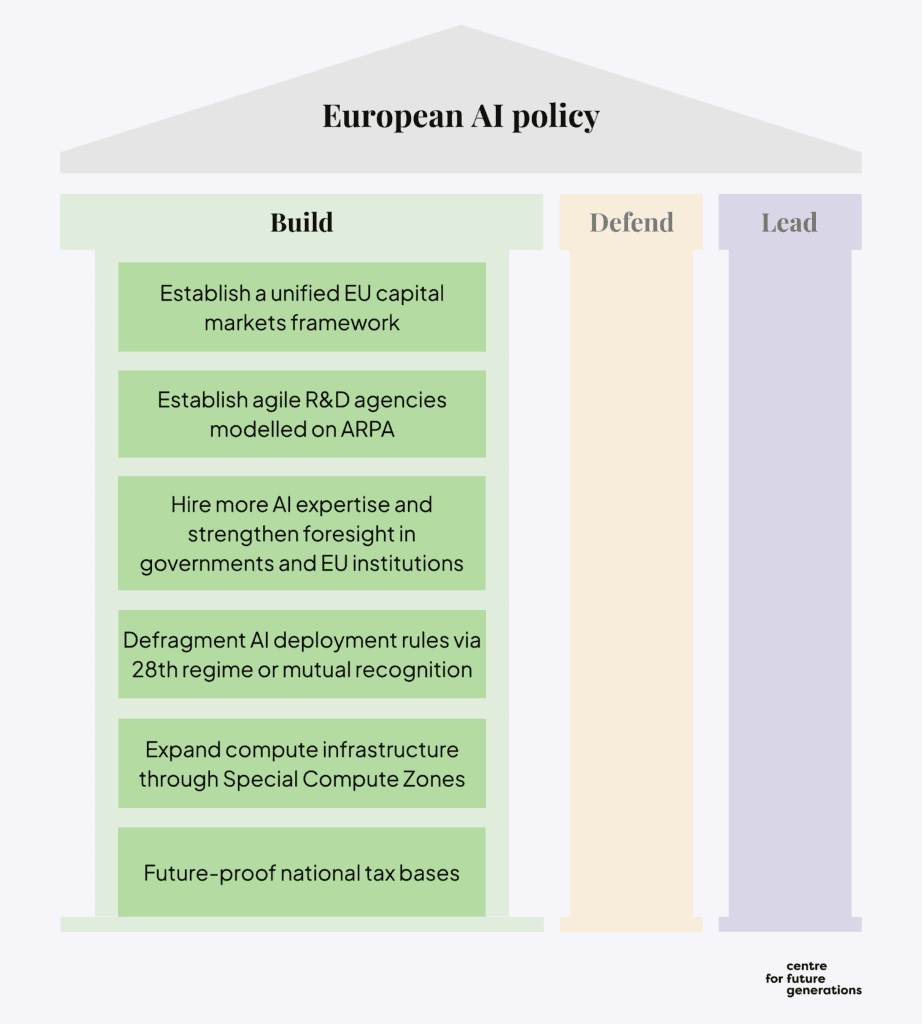

This report builds on CFG’s scenario work by identifying twenty policy options, each of which performs well across all five scenarios, so that they strengthen Europe’s strategic position (analysed in our ‘Europe and Geopolitics of AGI’[2] report) regardless of which future materialises. Using the scenarios as both an identification framework and a testing ground, we assessed each option’s urgency, political tractability, implementation difficulty, and performance across different AI futures. The result is a portfolio of measures that address fundamental vulnerabilities constraining Europe’s ability to act, organised around three interdependent pillars: BUILD capacity for competitiveness, DEFEND digital sovereignty, and LEAD global governance.

Before turning to each pillar, we highlight six cross-cutting actions that demand urgent attention across different AI futures. They are necessary prerequisites that unlock subsequent options and address acute vulnerabilities:

- Establish a Unified EU Capital Markets Framework: Unlocks capital for AI and deep-tech investment; addresses Europe’s structural disadvantage in accessing frontier-scale funding.

- Hire AI expertise into government at near-industry compensation: All recommendations require in-house technical capacity to implement effectively.

- Expand Compute Infrastructure Through Special Compute Zones: Europe hosts only 5% of global AI compute; streamlined permitting attracts private investment without large public expenditure.

- Deploy AI-powered cyber defence across critical infrastructure: AI-enabled threats scale at machine speed; traditional human-paced security cannot keep up.

- Fund Research to Harden AI Models Against Jailbreaks and Misuse: Balances innovation benefits of open models with security risks; reduces pressure for restrictive governance.

- Invest in Foundational AI Safety Science: Technical credibility requires genuine research capability; builds European influence in global standards-setting.

With these urgent actions underway, three pillars organise the remaining recommendations:

The first pillar, BUILD capacity for competitiveness, recognises that Europe’s internal capacity—its ability to attract investment, generate innovation, and scale infrastructure—determines what resources it has to work with regardless of which AI future unfolds. Six recommendations address these foundations:

Immediate Actions:

- Establish a Unified EU Capital Markets Framework (see above)

- Hire AI expertise into government at near-industry compensation (see above)

- Expand Compute Infrastructure Through Special Compute Zones (see above)

Strategic Foundations:

- Establish ARPA-style agile R&D agencies – Proven mechanism for rapid innovation (see UK’s ARIA, Germany’s SPRIN-D)

- Defragment Single Market via 28th regime – Addresses scale disadvantages limiting European competitiveness

Long-term Positioning:

- Future-proof national tax bases against AI-driven labour displacement – Foundational economic reform with long implementation horizon but essential for fiscal sustainability.

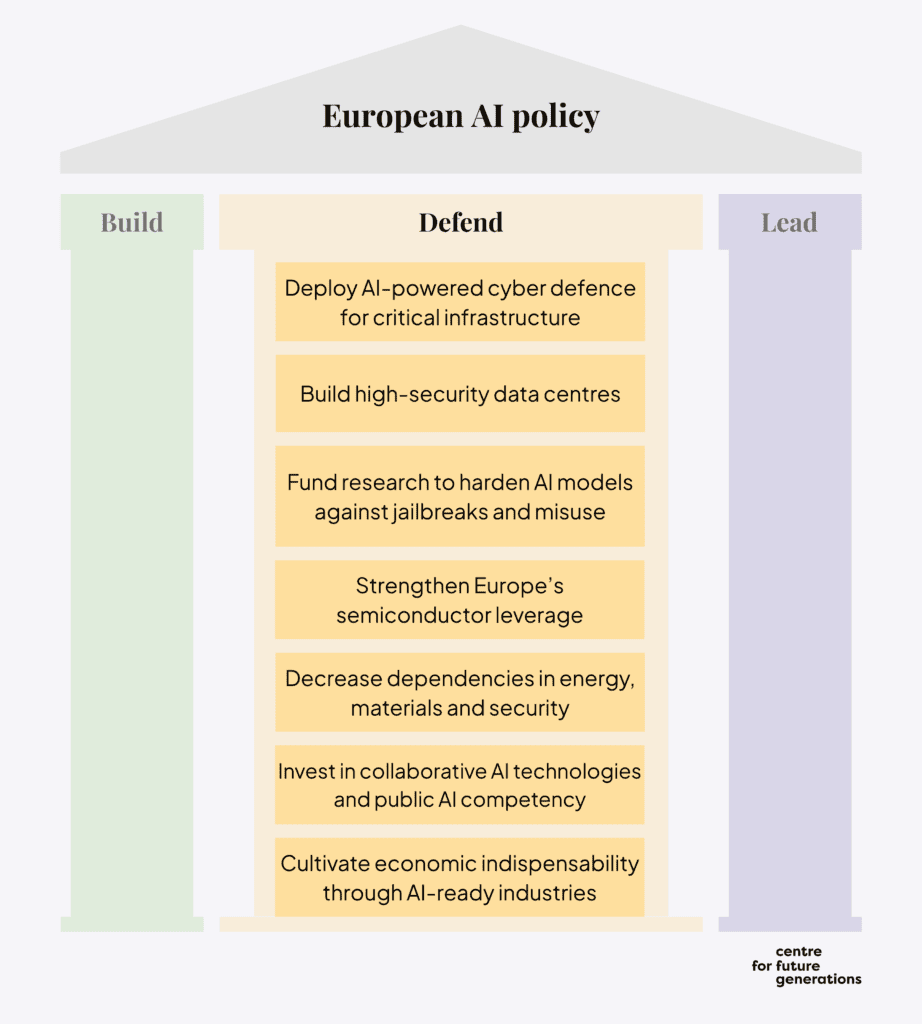

The second pillar, DEFEND digital sovereignty, recognises that Europe’s resilience to external pressure—both strategic dependencies that could be exploited and AI-enabled threats scaling faster than traditional defences—determines whether Europe can maintain meaningful autonomy over its digital future. Seven recommendations build this resilience:

Immediate Actions:

- Deploy AI-powered cyber defence across critical infrastructure (see above)

- Fund research to harden AI models for safer open-sourcing (see above)

Strategic Foundations:

- Build high-security sovereign data centre – Physical infrastructure for digital sovereignty takes years; starting now ensures capacity when needed

- Strengthen Europe’s semiconductor leverage through comprehensive dependency mapping, targeted investments, and EU-wide coordination mechanisms – Foundation for any semiconductor strategy; provides visibility for informed decisions.

- Invest in collaborative AI technologies and public AI competency — including deliberation platforms, community verification systems, and synthetic media detection — to build democratic resilience against AI-enabled manipulation

Long-term Positioning:

- Decrease dependencies in energy, materials, and security – Systematic resilience building across sectors

- Position European industries as essential AI value chain partners – Long-term economic positioning and supply chain integration

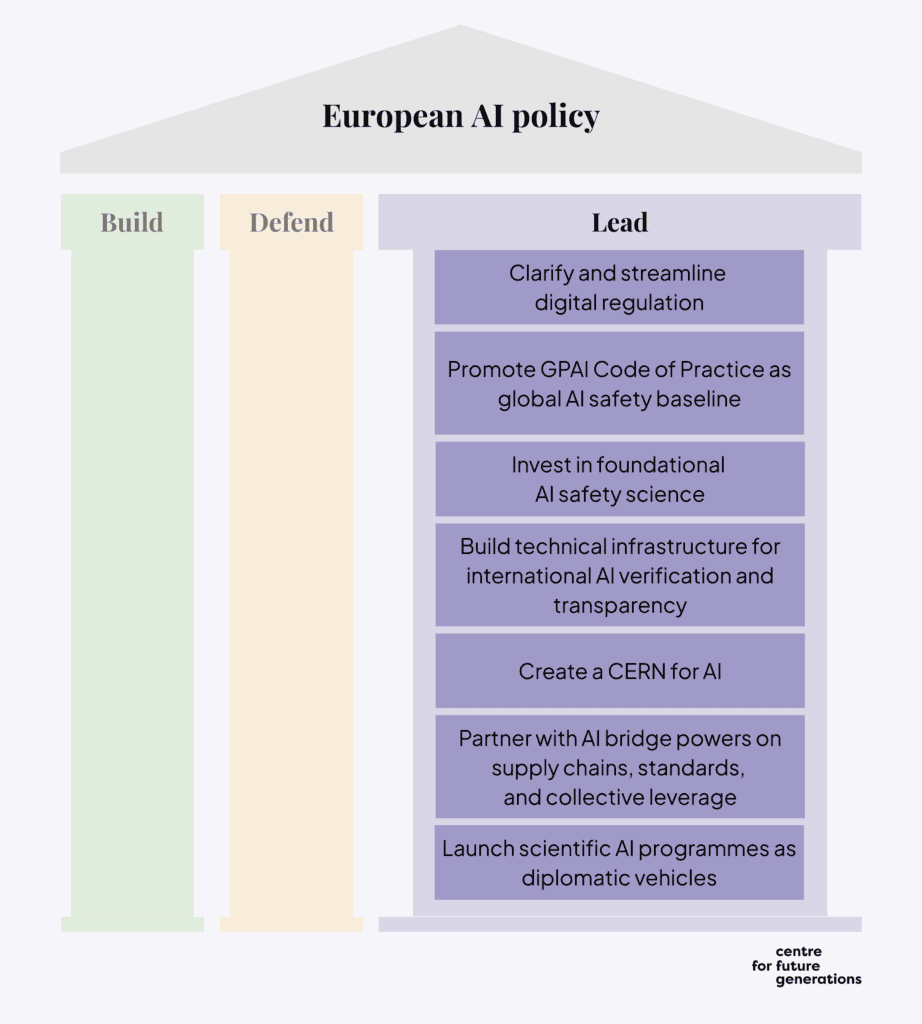

The third pillar, LEAD global governance, recognises that shaping global AI governance requires technical capacity to develop and evaluate frontier systems independently, and institutional infrastructure to make international agreements credible. Seven recommendations build this influence:

Immediate Actions:

- Invest significantly in foundational AI safety science (see above)

Strategic Foundations:

- Streamline EU digital regulation to retain protections while improving clarity and competitiveness – Addresses competitiveness concerns while maintaining European values; a politically achievable reform that demonstrates responsiveness.

- Partner with AI bridge powers (UK, Canada, Japan, South Korea, India) – Build complementary coalition with combined strengths outside US-China binary.

Long-term Positioning:

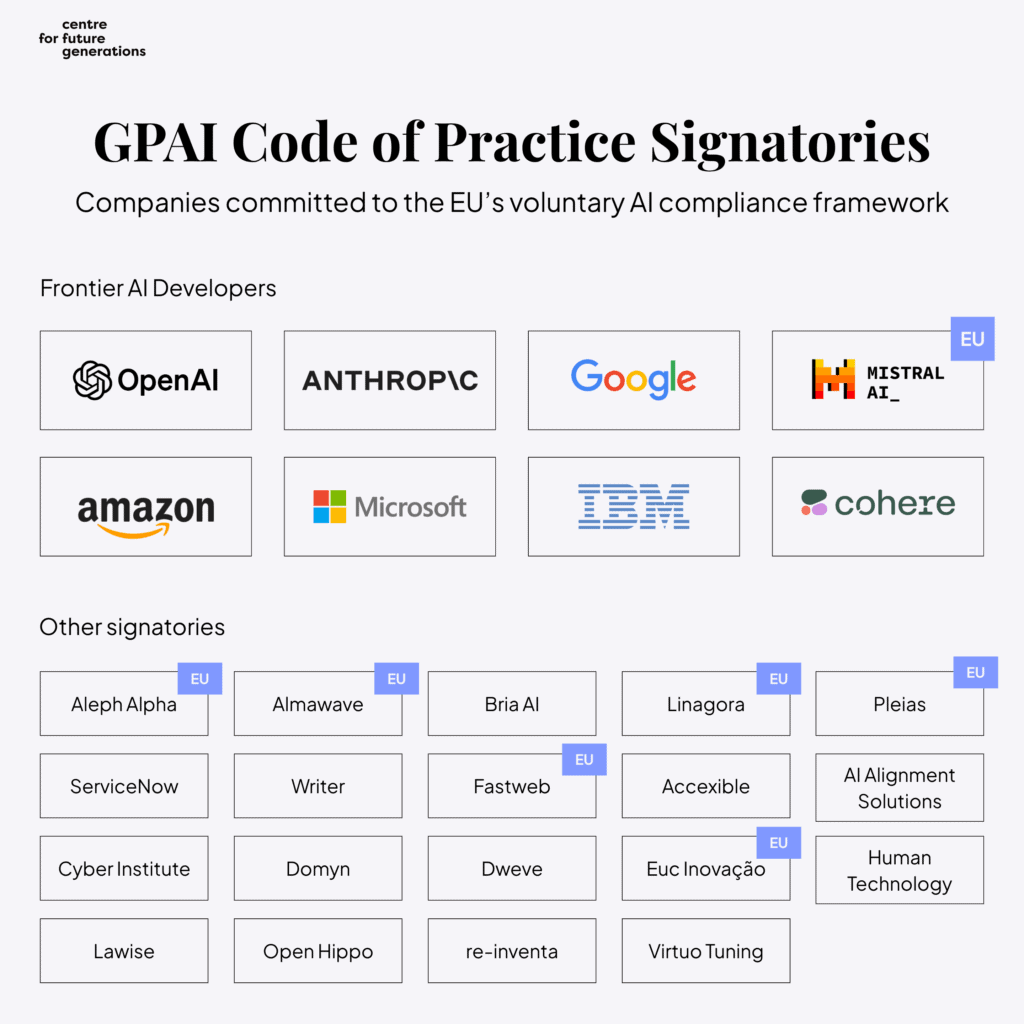

- Promote the GPAI Code of Practice as global safety baseline – Already signed by major AI companies (OpenAI, Google, Microsoft, Amazon, Anthropic); Europe can establish first-mover credibility in global governance.

- Create a CERN for AI — a jointly governed institution with frontier-scale compute to train sovereign models, advance safety research, and anchor partnerships with AI bridge powers.

- Develop verification infrastructure Europe can provide as an international broker – Hardware tracking, secure computation, and tamper-evident logging position Europe as a trusted third party.

- Accelerate AI for global challenges through bilateral programmes – Europe’s position outside the direct US-China competition, combined with its regulatory experience and growing technical capacity, creates the opportunity to host trusted infrastructure and set safety standards in areas that demand constructive AI use and partnerships (climate, health, agriculture).

These recommendations span both EU Commission mandates and Member State competencies by design. We make recommendations across this spectrum because Europe’s strategic challenge demands action at all levels simultaneously; neither the Commission alone nor Member States individually can implement the full portfolio. By implementing these options, Europe moves from a reactive stance to a proactive one, securing its prosperity and democratic values regardless of which AI future ultimately unfolds.

Introduction

The Challenge

AI development is accelerating, but its trajectory remains deeply uncertain. The past three years have seen capabilities advance faster than most experts predicted[3]—yet whether this pace continues, plateaus, or gives way to something more disruptive is genuinely unknown.[4]

This uncertainty creates a strategic trap for policymakers: acting decisively on a single anticipated future risks catastrophic unpreparedness if a different trajectory unfolds, yet waiting for clarity risks losing the window to act at all. To navigate this, CFG conducted a structured scenario planning exercise—AI Possible Futures[5]—developing five core scenarios through expert workshops and rigorous analysis. In this piece, we use these scenarios to stress test a set of policy interventions for their robustness. We bring these scenarios into focus here because policy choices made today will shape which futures become possible, and robust policy requires preparing for multiple outcomes simultaneously rather than betting on a single forecast.

The five core trajectories are:

Plateau: AI capabilities improve steadily but hit diminishing returns as data scarcity and reliability issues limit agentic systems. Europe closes the capability gap while open-weight models spread, enabling pragmatic adoption focused on human-augmenting applications. Society gains time to develop robust governance, though slower progress means more modest productivity gains and wider adoption of open-sourced models that are easier to misuse. The policy focus shifts from racing to the frontier toward maximising deployment benefits while managing misuse risks.

Big AI: Capability growth continues at the current pace but further concentrates in a small number of dominant firms. Training costs and infrastructure requirements create virtually insurmountable barriers to entry; open-weight models face mounting restrictions. Specialised AI agents transform industries through dramatic productivity improvements and AI assistants enhance daily life. The central challenge becomes preventing lock-in and power concentration, managing labour displacement, and ensuring Europe retains alternatives to providers who could restrict access or extract concessions.

Arms Race: AI becomes a primary axis of US-China strategic competition. Export controls tighten, semiconductor chokepoints are weaponised, and safety considerations are subordinated to competitive pressure. Europe faces mounting pressure to align with one of the blocs while attempting to maintain strategic flexibility. Near-miss crises could force major powers into an uneasy multipolar balance, with Europe and neutral countries establishing international monitoring frameworks and auditing mechanisms that create patterns of managed de-escalation despite ongoing tensions.

Diplomacy: A significant AI incident—or credible evidence of catastrophic risk—triggers international coordination. Governments negotiate safety standards, verification regimes, and potentially development pauses. Europe’s regulatory experience and diplomatic infrastructure become strategic assets. Breakthroughs in alignment tools could enable a stable governance regime with redistributed AI revenues and improved living standards, or the cooperation could fracture into an unstable pause where secretive development continues and trust erodes.

Takeoff: AI systems fully automate their own R&D, creating compounding improvement cycles that drive transformative breakthroughs in science, medicine, and productivity. Material conditions improve dramatically with potential for coordinated global governance—though maintaining human oversight becomes challenging as systems develop beyond human comprehension, raising questions about agency and alignment.

The scenarios are a structured way of thinking about uncertainty and each demands a different response. The challenge for policymakers is that the same intervention can be essential in one future and counterproductive in another. Acting decisively risks over-commitment to the wrong future, while waiting for clarity risks losing the window to act at all. This report focuses on providing answers on how to act under such uncertainty.

Our Approach: Robust Policy Options

This report identifies twenty policy options designed to perform well across all five AI futures.

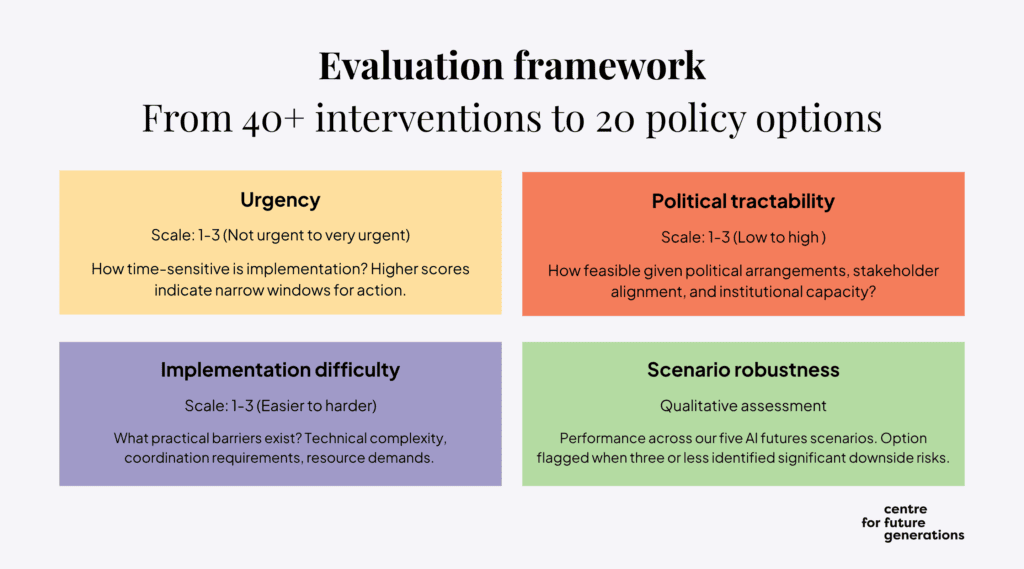

The selection process for these policy options began with a longlist of over forty interventions inspired by our recent ‘Europe and Geopolitics of AGI’[6] report and other previous research. Each intervention was evaluated with regard to its urgency, political tractability, implementation difficulty, and scenario robustness. Each evaluator also expressed their overall confidence in recommending each option and selected their top 10 options from the longlist.

We retained the twenty options demonstrating strong cross-scenario performance, giving additional weight to interventions scoring high on both confidence and top-10 votes. Options where three or more evaluators identified significant downside risks in specific scenarios were flagged as potentially controversial.[7]
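The selection logic described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the report does not publish its scoring scale or weighting, so the 1-5 scale, the `bonus_weight` parameter, and all names here (`Option`, `composite`, `shortlist`) are hypothetical. Only the threshold of three or more evaluators flagging downside risk is taken from the text.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Option:
    name: str
    robustness: list[float]  # one cross-scenario score per evaluator (assumed 1-5 scale)
    confidence: list[float]  # one overall-confidence score per evaluator (assumed 1-5 scale)
    top10_votes: int         # evaluators who placed the option in their top 10
    downside_flags: int      # evaluators who saw significant downside risk in some scenario


def composite(opt: Option, n_evaluators: int, bonus_weight: float = 0.5) -> float:
    """Mean robustness plus a bonus for confidence and top-10 votes.

    The additive form and bonus_weight are assumptions made for illustration;
    the report says only that these two signals received additional weight.
    """
    bonus = mean(opt.confidence) / 5 + opt.top10_votes / n_evaluators
    return mean(opt.robustness) + bonus_weight * bonus


def shortlist(options: list[Option], n_evaluators: int, keep: int = 20) -> list[tuple[str, bool]]:
    """Return the top `keep` options as (name, potentially_controversial) pairs."""
    ranked = sorted(options, key=lambda o: composite(o, n_evaluators), reverse=True)
    # Flag rule from the text: three or more evaluators identified downside risk.
    return [(o.name, o.downside_flags >= 3) for o in ranked[:keep]]


if __name__ == "__main__":
    demo = [
        Option("A", [5, 4, 5], [5, 5, 4], top10_votes=3, downside_flags=0),
        Option("B", [4, 4, 4], [3, 3, 3], top10_votes=1, downside_flags=3),
        Option("C", [2, 3, 2], [2, 2, 2], top10_votes=0, downside_flags=1),
    ]
    print(shortlist(demo, n_evaluators=3, keep=2))
```

Keeping the controversy flag separate from the ranking score mirrors the text: flagged options can still be retained, but they are marked for closer scrutiny.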

The Framework: Build, Defend, Lead

After selecting the top twenty policy options, we organised them into three pillars, each representing a central political priority:

- BUILD: Strengthening European Competitiveness. Pillar 1 provides policy options that address Europe’s internal capacity—the foundations that determine what resources Europe has to work with. The structural weaknesses diagnosed in the Draghi[8] and Letta[9] reports, as well as our recent work,[10] constrain Europe’s options regardless of which AI scenario unfolds. Without addressing these foundations, defensive measures become harder to fund and leadership ambitions lack credibility. The options in this section connect directly to the Savings and Investment Union agenda, deepening of the Single Market, and the broader European competitiveness strategy.

- DEFEND: Securing European Digital Sovereignty. Pillar 2 addresses Europe’s resilience to external pressure—both strategic dependencies that could be exploited and AI-enabled threats that are scaling faster than traditional defences. This pillar concerns what Europe must protect and what vulnerabilities it must reduce to achieve autonomy. The options here align with the European Chips Act, the cyber resilience agenda, and broader discussions about strategic autonomy.

- LEAD: Shaping Global AI Governance. Pillar 3 addresses Europe’s external influence—the opportunity to shape how AI develops globally rather than merely responding to technologies and rules developed elsewhere. Europe has demonstrated this capacity before: most leading AI companies have voluntarily signed the GPAI Code of Practice.[11] But regulatory export is only one pathway. Europe can lead by investing in safety research that remains globally underfunded, developing verification mechanisms that enable trust between rivals and building institutional infrastructure for international cooperation. This positions Europe to build coalitions with bridge powers and guide global AI governance from a foundation of technical credibility. The options in this section are about building the infrastructure of influence—the research capacity, verification tools, diplomatic partnerships, and exportable standards that allow Europe to shape outcomes rather than merely respond to them.

These three pillars—strengthening capacity for competitiveness, securing digital sovereignty, and shaping global governance—are simultaneous imperatives. They are also deeply interdependent: without economic strength, Europe cannot fund the infrastructure sovereignty requires; without sovereignty, its claims to governance leadership ring hollow. To succeed, Europe must strengthen, protect, and shape its position all at once.

This portfolio should not be treated as a menu for cherry-picking. The recommendations function as a system where missing components undermine the whole: a CERN for AI requires compute infrastructure from Special Compute Zones to train sovereign models; semiconductor leverage lacks credibility without reducing energy dependencies; European AI companies cannot become economically indispensable partners if Single Market fragmentation forces them to relocate abroad before reaching scale. Partial implementation doesn’t deliver partial results—it risks fragmented capabilities that cannot compete with the integrated strategies of rival powers.

How to Read This Report

Each policy option presented in the remainder of this chapter details the intervention, why it matters, and key considerations.

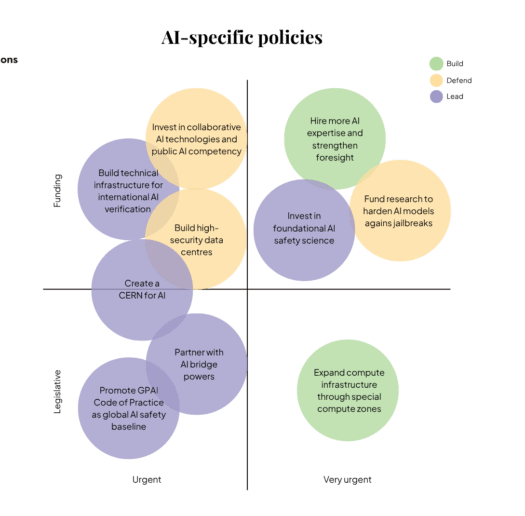

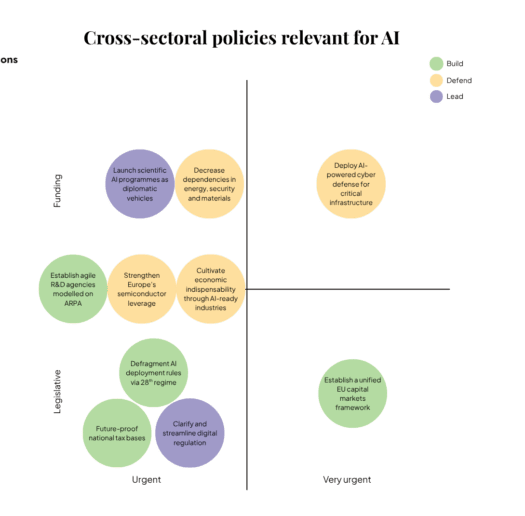

The twenty options vary significantly in their implementation requirements and timelines. Some demand immediate action, while others build strategic capacity over longer horizons. Some require legislative reform, others direct investment. To help policymakers prioritise and sequence interventions, we categorise options across three dimensions:

By urgency: Six options are rated as very urgent (★★★), where delay significantly reduces effectiveness or forecloses future options. These include fundamental capacity-building measures (capital markets, compute infrastructure, AI expertise) and defensive imperatives (cyber defence, safety research). Fourteen options are urgent (★★☆), representing important but slightly more flexible priorities.

By implementation type: Options span legislative action (7), direct funding/investment (9), and hybrid approaches requiring both legislation and funding (4). This distribution reflects AI governance’s dual nature—requiring both enabling frameworks and tangible resources.

By policy scope: Ten options are AI-specific, focusing exclusively on AI systems and governance. Ten are cross-sectoral, affecting multiple domains or requiring coordination with broader policy areas like energy, defence, or financial regulation. This balance reflects that AI governance cannot be separated from foundational economic and security policy.

Further, each option might be relevant for different implementers: some fall within the direct competence of EU institutions, some within the direct competence of Member States, and some are amenable to a dual approach in which they can be implemented at both levels simultaneously and to some extent independently. We have indicated which implementers are relevant in the scorecard at the top of each policy option, along with assessments of urgency, political tractability, and implementation difficulty.

Finally, the policy options outlined are suggestions, not prescriptions. We present the case for action, but priorities will naturally vary based on political and institutional contexts. Furthermore, this list is not exhaustive; we prioritise robust, tractable interventions over those that are highly scenario-specific or politically challenging. This is also a living document: as AI evolves, CFG will update this analysis and welcomes engagement to stress-test these ideas. The objective of this report is not to forecast the future, but to strengthen Europe’s preparedness for the challenges ahead.

BUILD: Strengthening European Competitiveness

Europe talks about AI ambitions but underinvests in the foundations that would make them achievable. This matters more for AI than for previous technologies: frontier development is extraordinarily capital-intensive, and the economics favour concentration. Training costs already run to hundreds of millions.[12] Infrastructure investment runs to billions. Talent concentrates where capital and compute are abundant. Europe’s fragmented capital markets, slow permitting, and venture funding gaps—documented exhaustively in the Draghi and Letta reports—are not new problems, but AI makes them more costly. Without the ability to invest at scale, Europe cannot fund the sovereignty it seeks or the influence it claims.

What these weaknesses cost depends on how AI develops:

- In Plateau, where progress slows but AI diffuses widely, competition shifts to deployment and integration—Europe would need capacity to adopt and adapt capable systems across sectors.

- In Big AI, where a few dominant providers control the most capable systems, Europe needs credible alternatives or faces dependence on foreign platforms whose terms it cannot set.

- In Arms Race, economic weight translates directly into geopolitical options: states that cannot fund their own capabilities have fewer cards to play.

- In Diplomacy, Europe’s influence depends partly on what it brings to the table—technical capacity and investment, not just regulatory frameworks.

- And in Takeoff, the question is whether Europe has built the capacity to act. Such capabilities cannot be built fast enough to keep up with rapid AI progress—they must already be in place.

The six options in this section target the conditions required to build the technology. They address the capital structures, R&D institutions, permitting regimes, and market conditions that determine whether Europe can act on its stated ambitions or remains structurally unable to invest at the scale AI development demands.

Establish a Unified EU Capital Markets Framework

EU Commission and Member States

★★★ Very urgent

★☆☆ Low

★★★ High

What: Europe should complete the Savings and Investment Union by harmonising insolvency laws, supervisory rules, and financial market regulations so that capital can flow freely across Member States without 27 different compliance regimes. A unified framework would lower transaction costs, broaden investor pools, and strengthen financing availability for advanced AI and deep-tech companies across Europe.

Why it matters: The development of competitive AI systems requires extraordinary capital concentration—frontier models now cost hundreds of millions to train,[13] while building the computing infrastructure and acquiring talent necessary to compete globally demands multi-billion-dollar funding rounds.[14] Training costs for frontier AI models have grown at a rate of 2.4x per year since 2016;[15] if this rate holds, the largest training runs will cost more than a billion dollars by 2027—a scale of investment that privileges capital-rich, risk-taking incumbents. Yet European technology startups face substantial structural barriers to accessing such capital, with some 30% of European unicorns relocating abroad between 2008 and 2021,[16] primarily to the United States. The disparity is stark: in 2023, only 6% of global VC funding for AI startups went to European companies.[17] EU pension assets in 2022 amounted to only about 32% of GDP, compared to 142% in the US and 100% in the UK.[18] EU pension funds invest only 0.018% of their total assets in venture capital compared to 1.9% for US pension funds.[19] It is this linkage between compute costs and capital access that makes Europe’s fragmented financial architecture a geopolitical vulnerability in the competition over frontier AI. As the Draghi report[20] argues, Europe needs a genuine Savings and Investment Union to unlock this capital.
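The extrapolation behind the billion-dollar figure is simple compound growth, and a short Python sketch makes the arithmetic explicit. The 2.4x annual growth rate and the billion-dollar threshold come from the text; the baseline of $100 million in 2024 is purely an illustrative assumption (the text says only "hundreds of millions"), and the function names are hypothetical.

```python
def projected_cost(base_cost: float, base_year: int, target_year: int,
                   growth: float = 2.4) -> float:
    """Cost in target_year if costs multiply by `growth` every year."""
    return base_cost * growth ** (target_year - base_year)


def first_year_exceeding(base_cost: float, base_year: int, threshold: float,
                         growth: float = 2.4) -> int:
    """First year in which the projected cost rises above `threshold`."""
    if growth <= 1:
        raise ValueError("growth must exceed 1 for costs to cross the threshold")
    year, cost = base_year, base_cost
    while cost <= threshold:
        year += 1
        cost *= growth
    return year


if __name__ == "__main__":
    BASE_COST = 100e6  # assumed baseline in USD, not a figure from the report
    BASE_YEAR = 2024   # assumed baseline year
    for year in range(BASE_YEAR, 2028):
        print(year, f"${projected_cost(BASE_COST, BASE_YEAR, year) / 1e6:,.0f}M")
    print(first_year_exceeding(BASE_COST, BASE_YEAR, 1e9))  # 2027 under these assumptions
```

Under these assumptions the projected cost passes one billion dollars in 2027, consistent with the estimate in the text. A different baseline would shift the crossing year, which is why the growth rate matters more than the exact starting point.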

Key considerations:

- Requires significant political will to harmonise deeply entrenched national financial regulations

- Even specialised AI applications require serious upfront funding—this isn’t just about frontier model development

- Weak university-industry linkages and capital scarcity at growth stages compound the challenge for application-focused AI companies building on frontier models

- Equally critical is reforming prudential requirements for institutional investors, particularly pension funds, to enable higher allocations to venture capital and growth equity.

- Maintaining regulatory leverage depends on economic scale[21]—unified capital markets prevent the competitive erosion that would shrink Europe’s relative market size and diminish the Brussels Effect that has historically enabled the EU to export standards globally.

Robustness: Critical in Big AI and Arms Race where capital concentration determines competitive position; essential in Takeoff for rapid mobilisation when needed; important in Diplomacy for bringing capabilities to international negotiations; valuable in Plateau for funding deployment infrastructure, though less urgent.

Establish Agile R&D Agencies Modelled on ARPA

EU Commission and Member States

★★☆ Urgent

★★☆ Mid

★★☆ Moderate

What: Transform Europe’s public funding structures to match the speed and scale of AI-enabled research acceleration. Establish advanced research agencies modelled on ARPA, similar to the UK’s Advanced Research and Invention Agency (ARIA[22]) and Germany’s Federal Agency for Breakthrough Innovation (SPRIN-D[23]), with agile governance, higher risk tolerance, and programme manager autonomy.

Why it matters: Advanced AI has the potential to significantly accelerate scientific progress and enable development of transformative technologies. Yet Europe’s current public funding structures—often characterised by bureaucratic, fragmented programmes,[24] risk-averse evaluation criteria, and emphasis on incremental advances—are mismatched with the speed and scale of AI-enabled research acceleration. Current EU AI initiatives are increasingly ambitious, but not yet calibrated to the nature of growing competition between global AI powers. Without reform, Europe risks missing the narrow window where advanced AI could amplify its research strengths into lasting technological leadership.

At EU level, the newly announced Resource for AI Science in Europe[25] (RAISE) presents a real opportunity to turn this tide. But Member States have additional options: they can establish new advanced research agencies modelled on the UK’s ARIA[26] and Germany’s SPRIN-D.[27] These institutions adopt agile governance structures, higher risk tolerance, and programme manager autonomy that enable pursuit of high-impact research trajectories. While individual project failure rates are high in such institutions, the collective probability of breakthrough returns is significantly higher than under more conventional structures.[28] These agencies provide the missing link between Europe’s world-class fundamental research—where it remains globally competitive—and its long-standing gap in translating discoveries into economic and strategic advantage at the speed AI research now demands, offering a focused institutional mechanism that existing EU frameworks cannot provide.

Key considerations:

- Public funding could prioritise research domains where AI acceleration could yield disproportionate returns and control over future strategic supply chains. Examples include photonic computing architectures that could achieve massive improvements in computational efficiency;[29] advanced materials discovery for room-temperature superconductors and carbon capture technologies;[30] and synthetic biology platforms that enable next-generation medical interventions.[31]

Robustness: Critical in Arms Race where state-backed R&D becomes essential for military applications and national champions; highly valuable in Diplomacy for funding safety research and verification technologies; useful in Big AI for exploring domains the private sector neglects; moderate value in Takeoff as enabler of rapid safety research; less critical in Plateau where breakthrough innovation slows.

Hire More AI Expertise and Strengthen Foresight in Governments and EU Institutions

EU Commission and Member States

★★★ Very urgent

★★☆ Mid

★★★ High

What: Build substantial in-house AI expertise within governments by recruiting technical experts with recent industry experience, complemented by generalists with strong track records in AI governance and foresight. This requires departing from standard civil service hiring: offering close-to-industry compensation, bypassing multi-month recruitment procedures, and centralising expertise in units with genuine authority rather than advisory mandates. The UK’s approach[32]—recruiting former OpenAI and DeepMind staff into the AI Security Institute within months, and appointing a Chief Technology Officer from industry as the Prime Minister’s AI Adviser—provides a viable template.

Why it matters: Governments often try to address lack of internal AI expertise by commissioning external advice. However, without sufficient internal knowledge, it’s difficult to ask the right questions or judge conflicting opinions. European governments currently lack adequate strategic awareness of frontier AI progress;[33][34] without accurate assessment of technological trajectories and their implications, even well-resourced actors may systematically make poor decisions. Old credentials in AI mean relatively little in this fast-moving field. A cautionary example: the Dutch government commissioned an analysis on AI and public values[35] that took its Scientific Council for Government Policy[36] 3.5 years to write, resulting in ‘Mission AI’[37]—a 500-page report released three weeks before ChatGPT that fails to mention large language models. The UK AISI, by contrast, rapidly attracted world-leading talent through unconventional means[38], while the EU AI Office mostly had to settle for more junior employees,[39] bottlenecked by bureaucracy and salary limitations.[40] Without technical experts who understand frontier systems firsthand, Europe cannot credibly evaluate AI risks, verify treaty compliance, or negotiate from strength—leaving it dependent on foreign institutions for the strategic intelligence needed to protect European interests.

Key considerations:

- Regulatory capture concerns require management. Hiring from companies you regulate creates perception issues; conflict-of-interest frameworks and cooling-off periods are essential.

- Expertise must be paired with decision-making authority to be effective.

- Security clearances create bottlenecks. Industry recruits typically lack the clearances required for sensitive national security work; obtaining them can take 6-18 months, conflicting with the need for speed.

Robustness: Critical across nearly all scenarios—essential in Takeoff and Arms Race where governments must assess risks and act decisively; vital in Diplomacy for designing verification and negotiating agreements; important in Big AI for regulating dominant providers; valuable even in Plateau for managing deployment, though stakes are lower.

Defragment AI Deployment Rules via 28th Regime or Mutual Recognition

EU Commission and Member States

★★☆ Urgent

★★★ High

★★☆ Mid

What: Harmonise vertical, sector-specific rules so that AI systems meeting common standards can be deployed throughout Europe. Address the patchwork of 27 different healthcare data regimes, education technology standards, financial services requirements, and public procurement processes. Two complementary mechanisms might be useful for this: 1) the 28th regime[41]—an optional EU-wide legal track that allows companies to bypass 27 national regulatory regimes without forcing Member States to abandon their own rules; and 2) mutual recognition frameworks where AI systems approved in one Member State are automatically valid in other Member States.

Why it matters: A genuinely harmonised Single Market creates one of the world’s largest unified economic zones—with roughly 440 million consumers and a GDP of approximately €18 trillion, the EU is currently the largest trading partner for the United States. This economic weight provides both direct leverage to negotiate terms of access for frontier AI capabilities and indirect normative influence through the Brussels Effect: the tendency for multinational companies to adopt EU regulations globally to avoid maintaining different standards for different markets. This is already seen in practice, with most leading AI companies voluntarily signing on to the EU GPAI Code of Practice, including OpenAI, Google, Microsoft, Amazon, Anthropic, and Mistral. While Europe has made progress with EU-level digital frameworks, the Draghi report[42] notes the market’s true potential remains constrained by fragmentation at the national level. This patchwork forces businesses to engage selectively with individual Member States, making it harder to scale. Critically, the fragmented Single Market also makes it less attractive for foreign companies to do business in Europe and lowers the opportunity cost for any actor contemplating AI access restrictions. As the Letta report[43] argues, there is much to gain by harmonising these rules.

This longstanding challenge gained concrete political momentum in January 2026 when President von der Leyen announced at Davos[44] that the Commission would “soon put forward” the 28th regime as “EU Inc,” enabling 48-hour online company registration with unified corporate rules across Member States. This commitment—backed by grassroots demand from the EU Inc initiative[45] representing 22,000+ signatories from Europe’s startup ecosystem—addresses foundational barriers in company formation. However, while this addresses foundational corporate establishment (company formation, investment terms, stock options), AI deployment still requires sector-specific mutual recognition frameworks for healthcare data regimes, financial services, education technology, and public procurement, which should be pursued in parallel rather than waiting for full EU Inc implementation.

Key considerations:

- National rules often reflect legitimate concerns, not mere protectionism. Healthcare data regimes exist because Member States have different privacy traditions and liability frameworks. Harmonisation that ignores these concerns will face sustained political resistance. The 28th regime could work precisely because it doesn’t force abandonment of existing national rules.

- Mutual recognition offers a faster path than full harmonisation. An AI system approved for healthcare deployment in Germany could be automatically recognised in France—no second approval process. This sectoral approach can deliver immediate benefits while the broader 28th regime negotiations proceed.

- Even partial progress (e.g., healthcare mutual recognition) could substantially strengthen Europe’s position

- Europe’s access to leading AI systems may prove contingent on securitisation dynamics—active diplomatic engagement and market-based leverage are essential

Robustness: Most valuable in Plateau and Big AI where widespread deployment and market power matter; unified market strengthens Brussels Effect and negotiating position with providers; useful in Takeoff for organisational readiness; moderate value in Arms Race where state backing matters more than market fragmentation; helpful in Diplomacy for collective leverage.

Expand Compute Infrastructure Through Special Compute Zones

EU Commission and Member States

★★★ Very urgent

Figure 6: Categorisation of Cross-sectoral policy options relevant for AI by urgency (x-axis) and implementation type (y-axis). Source: CFG

★★☆ Mid

★★☆ Moderate

What: Designate Special Compute Zones,[46] specifically designated areas with streamlined rules for building and operating AI data centres and supporting on-site power infrastructure. Prioritise sites with existing energy infrastructure, such as to-be-decommissioned coal power plants[47] with robust grid connections.

Why it matters: Europe hosts only approximately 5% of global AI compute infrastructure,[48] while European AI companies often rely on American AI clouds for both training and inference.[49] Without domestic infrastructure, Europe cannot train competitive foundation models, run low-latency inference for real-time industrial applications, or ensure that sensitive government and commercial data remains under European legal jurisdiction.

The Commission has recognised this vulnerability. The Cloud and AI Development Act[50] sets an ambitious target: triple EU data center capacity within five to seven years. Special Compute Zones would be a useful tool to enable this buildout, because they directly address the most pressing bottlenecks cited by industry. The Commission’s own Call for Evidence identifies permitting timelines exceeding 48 months,[51] difficulties accessing energy and land, and fragmented national approaches as key obstacles. Special Compute Zones attack these directly: pre-approved sites with existing grid connections, single-window permitting that collapses approval timelines from years to months, and frameworks for co-locating clean energy generation.

Site selection should prioritise brownfield industrial areas, particularly decommissioned coal plants that already possess heavy-duty grid connections (500-1000 MW) and industrial zoning. Greece is already doing this,[52] redeveloping former lignite mines into data centre and solar campuses. We recommend the Commission legislate this as a regulation rather than a directive to ensure direct applicability across all 27 Member States without the delays of national transposition. The European Chips Act[53] provides precedent: its Regulation-based fast-track permitting helped attract TSMC’s Dresden investment.

Key considerations:

- The planned AI Gigafactories (approximately 500,000 advanced chips total) remain significantly smaller than comparable US infrastructure—OpenAI alone expects to own over 1 million GPUs by end of 2025

- Special Compute Zones create optionality without over-commitment. They establish favourable conditions for private investment without requiring large upfront public expenditure—uptake depends on market demand, making this a lower-risk intervention than direct state investment in compute.

- Ownership models affect sovereignty differently. Commercial inference can run on foreign-owned data centres on European soil. Defence and intelligence workloads may require European or public ownership. The tiered security framework should map to corresponding ownership requirements.

- Behind-the-meter energy bypasses grid queues. Co-located generation (solar, wind, eventually SMRs) can sidestep multi-year waits for new grid connections. Special Compute Zone designation should explicitly enable and incentivise such configurations.

- Infrastructure requirements differ by workload: frontier training demands hyperscale, power-dense clusters; high-security uses require air-gapped facilities; low-latency inference calls for distributed regional capacity

- Governments should also commission detailed demand scenarios that take AGI seriously to determine appropriate levels of sovereign compute and public investment—investing too little amplifies dependencies, while investing too much could result in poor returns or political backlash.[54]

Robustness: Critical in Arms Race and Takeoff where sovereign compute becomes strategic necessity; essential in Big AI for alternatives to foreign cloud providers; important in Diplomacy for hosting secure verification infrastructure; valuable in Plateau for efficient deployment despite slower progress.

Future-Proof National Tax Bases

Member States

★★☆ Urgent

★☆☆ Low

★★★ High

What: Develop plans to address potential AGI-induced tax base erosion, anticipating the shift from labour to corporate income as automation increases, and the risk of services being powered by foreign companies that capture value outside European tax jurisdictions.

Why it matters: Even efficient governments with strong AI expertise will have limited capacity to steer the AGI transition if they face severe financial constraints. AGI’s potential to accelerate economic growth might seem to guarantee increased tax revenue, but two dynamics could erode tax bases instead. First, many future services may be powered by foreign companies (e.g., call centres replaced by voice agents from American AI companies), shifting value capture abroad. Second, if AGI leads to large-scale labour displacement, revenue shifts from labour to corporations, and since corporate taxes are often significantly lower than labour taxes in Europe,[55] governments could end up with less revenue despite economic growth. This creates a perfect storm: governments needing to redistribute more resources to address unemployment while seeing the funds to redistribute drying up.

As increasingly capable systems substitute for human labour across most tasks, wages collapse while returns to capital and control over AGI infrastructure surge, shifting income from workers to the owners of compute, models, and data. Wide joblessness and societal disruption could follow, as human labour retains little economic bargaining power. Over time, such an imbalance could hollow out the material foundations of political participation, making it increasingly difficult for democratic institutions to meaningfully constrain those who control AGI.

This could push governments toward protectionist measures: mandating human workers in sectors where AI could perform tasks more efficiently, or imposing restrictions on AI adoption. While politically attractive as a way to preserve employment, such measures would make European companies less competitive against foreign rivals using AI freely, ultimately accelerating economic decline and deepening dependence on external powers who capture the productivity gains Europe forgoes. The geopolitical implications of potential labour market disruptions must be addressed in advance; no easy solutions seem to exist.[56]

Key considerations:

- Simply raising corporate taxes brings many unintended side effects and may drive companies elsewhere

- AI taxes could limit adoption and heighten tensions with foreign technology providers

- The problem must be addressed while labour markets are stable—responses during crisis will be constrained

- Protecting human jobs to maintain tax bases would lower growth and increase foreign vulnerability—a trap to avoid

Robustness: Critical in Takeoff where massive automation could collapse labour income; important in Big AI and Arms Race where significant displacement begins; useful in Diplomacy for managing coordinated transition; lower urgency in Plateau where automation remains limited and tax base relatively stable.

DEFEND: Securing European Digital Sovereignty

Europe’s AI strategy requires a difficult balance. Access to frontier foreign AI systems is essential for capturing productivity gains and remaining economically competitive. Yet dependence on systems developed abroad creates exposure: to commercial terms Europe cannot set, to priorities that may diverge in a crisis, to supply chains that have already become instruments of great power competition. This tension—between the benefits of access and the risks of reliance—defines the sovereignty challenge.

At the same time, AI is changing what Europe must defend against. Cyberattacks increasingly operate at machine speed,[57] outpacing human response. Synthetic media is scaling beyond human verification.[58] Dual-use capabilities diffuse through open models and scientific publication.[59] Despite research indicating AI’s impact on the cyber offence-defence balance could be multifaceted,[60] some evidence suggests cyber offensive capabilities are advancing faster than defensive ones.[61] Traditional cybersecurity approaches (reactive, human-paced, perimeter-focused) are increasingly mismatched to threats that are automated, scalable, and already inside the information environment.

The specific demands of these two imperatives shift across scenarios:

- In Plateau, capable but narrow systems remain easy to misuse for phishing and targeted manipulation—diffuse threats requiring broad societal resilience.

- In Big AI, concentration of capabilities in a few providers creates coercion risks, making access to critical AI systems a point of leverage.

- In Arms Race, where safety is deprioritised for speed, the probability of AI-enabled threats materialising rises substantially.

- In Diplomacy, verification becomes central, placing a premium on secure infrastructure that can support international monitoring.

- In Takeoff, the security of models themselves and training infrastructure becomes a first-order concern.

Economic competitiveness creates value, but only robust digital defence ensures autonomy. This pillar is a necessary counterpart to BUILD and the prerequisite for LEAD, as Europe cannot shape global norms from a position of vulnerability.

The seven options in this section address both dimensions of digital sovereignty: reducing dependencies that create vulnerabilities to coercion, and building defensive capacity against AI-enabled threats. Together, they aim to ensure Europe retains meaningful choice over how AI is developed and deployed within its borders.

Deploy AI-Powered Cyber Defence for Critical Infrastructure

Member States and EU agencies

★★★ Very urgent

★★★ High

★★☆ Moderate

What: Systematically develop and deploy sovereign AI-powered defensive capabilities across government networks, critical infrastructure, and key industries, using a tiered security framework that aligns sovereignty requirements with risk levels.

Why it matters: Today’s frontier models are increasingly capable of assisting with large-scale cyberattacks. In September 2025, Anthropic uncovered a large espionage campaign[62] in which attackers used Claude to infiltrate major global targets, achieving several successful breaches. Although the underlying attack techniques were familiar, the incident shows that AI agents are shifting from passive assistants to operational force multipliers capable of executing complex campaigns at scale. This trend is reflected in rapid performance gains on standard evaluation benchmarks,[63][64][65] which show surging capabilities and dropping costs.

However, these growing cyber-capabilities could also be used for defence, through automated anomaly detection, rapid vulnerability patching, and adaptive threat response. That said, defensive adoption is currently trailing offensive innovation,[66] and this gap is structural, not just technical. Attackers operate unconstrained: they face no procurement rules, no interoperability mandates, no liability frameworks. Defenders, by contrast, must navigate regulatory compliance, data protection requirements, and lengthy implementation cycles. This asymmetry means that even as AI raises the ceiling of what defence can achieve in principle, the defending side starts at a disadvantage in practice, making the urgency of AI-enabled defence greater, not lesser, than the offensive threat picture alone would suggest. This gap is especially risky for Europe, where a fragmented attack surface stretches across central institutions and Member States with uneven levels of resilience. With ENISA[67] reporting a steady rise in large-scale attacks, relying solely on traditional security controls or foreign AI models is becoming increasingly untenable.

It is also worth acknowledging that deploying AI for cyber defence is not a neutral act. It raises legitimate sovereignty concerns — depending on which systems are used, by whom, and under whose oversight — and introduces a new attack surface in itself: an AI-powered defensive layer that is misconfigured, opaque, or compromised becomes a high-value target. This is why the architecture of AI cyber defence matters as much as its deployment.

To ensure its continued security, European countries should consider implementing tiered security frameworks that match sovereignty requirements to risk levels. For the most sensitive applications, Europe should demand full control of the development pipeline, from training data through deployment; for moderate-risk applications, adapting allied or open-source models with appropriate verification may suffice; for routine applications, commercial models with standard security measures could be appropriate. This proportional approach balances security with capability, avoiding both the trap of inferior but sovereign indigenous models and dangerous dependencies on opaque foreign systems.
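The tiered framework described above can be sketched as a simple lookup from risk tier to sovereignty requirement. This is a minimal illustration, not a proposed standard: the names `RiskTier`, `SovereigntyRequirement`, and `requirement_for` are hypothetical, and the three tiers simply restate the paragraph above.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """Risk levels named in the text; the identifiers are illustrative."""
    SENSITIVE = "most sensitive applications"
    MODERATE = "moderate-risk applications"
    ROUTINE = "routine applications"


@dataclass(frozen=True)
class SovereigntyRequirement:
    model_source: str  # where the model may come from
    safeguard: str     # what control or verification is expected


# Mapping restated from the paragraph above (assumed field structure).
TIER_REQUIREMENTS = {
    RiskTier.SENSITIVE: SovereigntyRequirement(
        model_source="indigenous models",
        safeguard="full control of the pipeline, from training data through deployment",
    ),
    RiskTier.MODERATE: SovereigntyRequirement(
        model_source="adapted allied or open-source models",
        safeguard="appropriate verification",
    ),
    RiskTier.ROUTINE: SovereigntyRequirement(
        model_source="commercial models",
        safeguard="standard security measures",
    ),
}


def requirement_for(tier: RiskTier) -> SovereigntyRequirement:
    """Return the sovereignty requirement that applies to a given risk tier."""
    return TIER_REQUIREMENTS[tier]


if __name__ == "__main__":
    for tier in RiskTier:
        req = requirement_for(tier)
        print(f"{tier.value}: {req.model_source} ({req.safeguard})")
```

The point of the sketch is that every application must land in exactly one tier; deciding which tier applies is the legal classification question raised under the key considerations.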

Key considerations:

- Unified classification framework: Member States must establish a clear legal framework to define which applications require full indigenous control versus those suitable for allied or commercial models, eliminating dangerous ambiguity.

- Continuous red teaming: Cybersecurity requires permanent “red teaming” to stress-test defences, utilising specialised internal EU units for sensitive infrastructure and external contractors for routine applications — including adversarial testing of the AI defensive systems themselves.

- Regulatory fast lanes for defence: Given the regulatory asymmetry favouring attackers, the EU should explore streamlined approval pathways for AI-based defensive tools deployed in critical infrastructure, without compromising accountability.

- Collective perimeter defence: Since interconnected infrastructure makes the EU only as strong as its weakest link, larger Member States must share defensive models and resources with smaller nations to prevent cascading failures.

Robustness: Critical in Arms Race and Takeoff where AI-enabled cyber threats escalate dramatically; essential in Big AI where automated attacks scale beyond human response capacity; important in Diplomacy for maintaining security during verification regimes; valuable in Plateau for cost-effective defence against diffuse threats.

Build High-Security Data Centres

Member States and coalitions

★★☆ Urgent

★★☆ Mid

★★★ High

What: Construct high-security AI data centres for intelligence, defence, and critical infrastructure applications. These facilities should meet stringent physical, cyber, and supply-chain security standards to prevent model weight exfiltration, data poisoning, and hardware compromise, and should support highly sensitive training, testing, and deployment workloads under full sovereign control or trusted shared governance arrangements.

Why it matters: To develop and deploy highly sensitive AI systems for intelligence, defence, and critical infrastructure, Member States need purpose-built, high-security AI data centres. Reliance on insecure or foreign facilities creates strategic vulnerabilities, since sensitive model weights could be exfiltrated, training data poisoned,[68] or hardware compromised at the chip level. The largest European Member States like France and Germany may hence consider investing in sovereign, medium-sized facilities meeting stringent security standards, equivalent to SL3 or SL4 on RAND’s security benchmark;[69] smaller Member States could secure access to such sites or pool resources to establish shared facilities under carefully designed governance structures.

Beyond sensitive AI development and inference, these secure facilities could also serve multiple strategic purposes, such as enabling isolated safety testing or acting as a compute reserve during crises. Furthermore, as AI governance frameworks mature, international agreements may require technical verification,[70] such as verifying model capability declarations and safety standards. Europe’s position outside the direct US-China competition, combined with its robust legal framework and leadership in regulatory standards (the “Brussels Effect”), positions it as a trusted institutional intermediary during periods of high mutual distrust between the major powers. High-security European data centres can serve as safe harbors for verification:[71] physically secure, legally transparent environments where US and Chinese models can undergo third-party auditing. By providing the infrastructure for verification—backed by strict EU data protection laws and hardware-level assurances—Europe can host the technical mechanisms necessary for global AI arms control and de-escalation.

Key considerations:

- Hardware-Level Governance: Security should begin at the hardware level, with Europe exploring procurement of secure processors that support on-chip governance.[72]

- Operational & Personnel Security: A cleared workforce and regular independent red teaming are essential to make such facilities a lasting capability.

- EuroHPC Integration: Secure facilities should complement the EuroHPC AI Factories,[73] serving as a restricted tier for national security workloads.

- Secure Resource Pooling: Research on federated learning and cross-border coordination[74] can help make shared resource pooling workable.

- Adaptive Security Standards: Security standards must adapt over time as threats evolve and capabilities improve.

Robustness: Essential in Arms Race and Takeoff where securing model weights and sensitive training becomes critical; valuable in Diplomacy for hosting neutral verification infrastructure; important in Big AI for sovereign alternatives to foreign cloud providers; useful in Plateau for protecting classified workloads.

Fund Research to Harden AI Models Against Jailbreaks and Misuse

Member States and EU agencies

★★★ Very urgent

★★☆ Mid

★★★ High

What: Invest in AI robustness and systemic safety to harden models, especially open-weight systems, against misuse, poisoning, and guardrail bypass, strengthening Europe’s security and resilience without resorting to restrictive governance.

Why it matters: AI-powered applications remain fragile when underlying systems lack guardrails. Current models suffer from significant vulnerabilities, including prompt injection, jailbreaking, and data poisoning, that allow malicious actors to circumvent refusal mechanisms or insert backdoors. The challenge is particularly acute for open-weight models, which are released with practically no effective defences against misuse: once weights are public, anyone can cheaply fine-tune away safety constraints entirely.[75] Recent research demonstrates that even a handful of poisoned documents can compromise a large model’s integrity,[76] and that most proprietary models’ guardrails can be bypassed with targeted attacks.[77] Such brittleness poses grave risks in cybersecurity and biosecurity, where the cost of exploitation is catastrophic; addressing it is therefore essential to maintaining a favourable offence-defence balance against AI-enabled threats.[78]

Moreover, it may not even be technically possible to fully prevent misuse of models whose weights are publicly available—fundamental questions[79] remain about whether safety can survive adversarial modification. Yet this makes the challenge more urgent, not less. Promising defensive approaches exist—including data filtering,[80] machine unlearning,[81] and tamper-resistant safeguards[82]—but all remain in their infancy, requiring substantial research investment to mature and validate. This represents a high-risk, high-reward opportunity: success would enable safer open-weight releases that preserve European digital sovereignty, while failure would force a choice between dangerous models or restrictive governance that increases foreign dependence.

Investment in AI robustness and systemic safety would serve a dual strategic purpose, protecting against emerging threats while reducing the pressure for restrictive governance. By building resilience against misuse, Europe can maintain access to and keep developing open-weight models, which are key to European digital sovereignty as they can be hosted, modified, and governed locally under EU law, without compromising security. Furthermore, Europe and other AI bridge powers are arguably more dependent on resiliency-enhancing technologies than the US or China: with far less control over frontier AI labs, cloud infrastructure, and core digital platforms, these nations cannot easily neutralise threats at their source, making technical resilience their most viable defence.

Key considerations:

- Shielding open source: Without robust defensive measures that can neutralise bad actors, the political pressure to ban open-source AI may grow.

- The offence-defence balance: Offence currently has a structural advantage in AI; funding must be disproportionately large to tip the balance, or defenders will remain permanently reactive.

- The safety-utility tradeoff: Excessive restriction and heavy censorship can undermine a model’s usefulness, causing it to reject legitimate requests and reducing its economic value.

Robustness: Critical in Plateau where open-weight models proliferate and misuse risks scale; essential in Arms Race where safety research is deprioritised; highly valuable in Takeoff for managing capable systems; enables cooperative safety work in Diplomacy scenarios; less urgent in Big AI where restrictions already limit open-weight access.

Strengthen Europe’s Semiconductor Leverage

Member States and EU Commission

★★☆ Urgent

★★☆ Mid

★★☆ Moderate

What: Recognise and protect Europe’s position as an indispensable partner in the global semiconductor ecosystem. Rather than pursuing manufacturing autonomy, Europe should safeguard the technologies that make it essential to global chip production—creating mutual dependence that naturally deters coercion. This requires three reinforcing capabilities[83]: comprehensive dependency mapping to understand where Europe’s value lies, targeted investment to protect existing strengths and secure future chokepoints, and coordination mechanisms that ensure Europe can respond collectively if chip access is threatened. To make this leverage credible, the EU should develop a spectrum of proportionate response options, ensuring it can signal resolve without being forced into either passivity or disproportionate escalation.

Why it matters: The threat to European chip access has fundamentally changed. When the Chips Act was drafted, the primary concern was accidental supply chain disruption: pandemics, droughts, facility fires. Today, threats are increasingly deliberate and strategic: China has demonstrated willingness to restrict exports for geopolitical purposes, while US policy increasingly prioritises American access. A strategy built around manufacturing capacity cannot address a world where semiconductors have become instruments of economic leverage.

Europe cannot win a subsidy race it cannot afford to enter. Reaching the 20% market share target would require investment exceeding €250 billion, and even then Europe would remain dependent on other countries for packaging, design IP, or raw materials. Strategic indispensability offers a more realistic path. Europe already provides irreplaceable contributions to global chip production: ASML’s lithography systems, Zeiss’s precision optics, TRUMPF’s high-power lasers, and imec’s pre-competitive R&D represent genuine value creation that the entire industry depends on. By ensuring these capabilities remain world-leading and anchored in Europe, the EU creates a situation where partners have strong incentives to maintain cooperative relationships, including continued European access to chips produced elsewhere.

However, strategic indispensability only deters coercion if Europe can credibly threaten to restrict access to its critical technologies when its interests are threatened. This requires establishing proportionate response mechanisms in advance. Rather than relying on blunt tools such as total export controls, Europe should develop a calibrated policy framework that allows graduated responses: limiting access to pre-competitive research programmes, restricting IP licensing, or conditioning maintenance and software updates for installed equipment. The specific measure depends on the nature and severity of the threat. This approach allows the EU to signal resolve and defend its interests without provoking further escalation, preemptive retaliation, or accelerated substitution by partners and competitors, positioning European firms as high-value enablers rather than coercive chokepoints.

Key considerations:

- Build situational awareness. As detailed in CFG’s Chips Act submission,[84] the Commission should map bidirectional dependencies—not just where Europe is vulnerable, but where global production depends on European technology. This requires mandatory data provision from key market actors and significantly expanded technical capacity at the Commission, which currently operates with a fraction of the resources the US allocated to CHIPS Act implementation.

- Protect and extend ecosystem leadership. The revised Chips Act should introduce a Strategic Semiconductor Asset designation for entities whose technologies are essential to global production. This should trigger tailored support: extended state-aid flexibility, automatic STEP “Sovereignty Seal” qualification, fast-track permitting, and streamlined IPCEI participation. The goal is ensuring these firms can continue to invest and innovate in Europe—not subsidising them to restrict sales.

- Anticipate the next generation of value creation. Beyond safeguarding existing advantages, Europe must also anticipate the erosion of current leverage as technologies mature. Strategic investment should therefore identify and target emerging control points such as advanced packaging, photonics, and edge inference hardware, allowing Europe to establish early leadership and sustain its role as an indispensable partner across future generations of the AI stack.

- Risk-sharing mechanisms: When European semiconductor companies face revenue losses due to geopolitically motivated export restrictions, an EU-level solidarity fund could compensate for the shortfall. This ensures that individual firms don’t bear disproportionate costs for collective European security interests and prevents commercial pressures from undermining strategic policy decisions.

- Collective decision-making: To make this leverage credible and prevent adversaries from pressuring individual Member States, semiconductor policy decisions should shift from national to EU level under Qualified Majority Voting. This allows costs, risks, and benefits to be shared across the bloc whilst strengthening Europe’s negotiating position with external powers.

Robustness: Most valuable in Arms Race and Big AI where chokepoint control provides negotiating power against coercion; important in Diplomacy for coordinated export controls; useful in Takeoff for influencing compute allocation; maintains strategic options in Plateau.

Decrease Dependencies in Energy, Materials and Security

Member States and EU Commission

★★☆ Urgent

★☆☆ Low

★★★ High

What: Systematically reduce strategic vulnerabilities in energy, security, and materials that limit Europe’s ability to wield its technological advantages.

Why it matters: Europe’s semiconductor ecosystem provides genuine strategic value, but this position means little if adversaries can retaliate through other channels. Europe depends heavily on external suppliers across critical domains: Chinese manufacturing inputs and materials processing, American security guarantees and LNG supplies, and until recently, Russian fossil fuels. As CFG’s Chips Act submission[85] notes, such dependencies “fundamentally limit how assertively Europe can wield its technological advantages.” For instance, leveraging semiconductor chokepoints becomes less credible if partners can respond through restrictions on energy supplies, critical materials, or security cooperation.

Beyond strategic leverage, energy constraints directly limit Europe’s AI capacity. The Draghi report identified energy costs as a key competitiveness constraint:[86] in 2023, industrial electricity prices in the EU were 158% higher than in the United States, and industrial gas prices 345% higher. This largely reflects Europe’s reliance on imported fossil fuels, a dependency made acute by the Russian invasion of Ukraine. Unlike the mostly integrated continental grids of the US and China, Europe’s energy system remains a collection of separate national grids with cross-border bottlenecks and divergent national regulations, complicating the large-scale, predictable power capacity that massive data center clusters require. Heavy bureaucracy is another blocker: permitting and grid-connection timelines for new power plants in Europe routinely run 5–9 years, while China is investing in new energy projects at a pace and scale unmatched by the US and Europe.[87]
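A short worked calculation may help read these figures: "X% higher" means the EU price is (1 + X/100) times the US price, so the gap is larger than the percentages may suggest at first glance. The sketch below uses only the two 2023 figures quoted above; the function name `price_multiple` is illustrative.

```python
def price_multiple(percent_higher: float) -> float:
    """Convert 'X% higher than the baseline' into a multiple of the baseline."""
    return 1 + percent_higher / 100


# Figures quoted above from the Draghi report (2023, EU relative to US).
electricity_multiple = price_multiple(158)  # industrial electricity
gas_multiple = price_multiple(345)          # industrial gas

print(f"Industrial electricity: {electricity_multiple:.2f}x the US price")
print(f"Industrial gas: {gas_multiple:.2f}x the US price")
```

On these figures, EU industrial electricity cost roughly two and a half times the US price, and industrial gas nearly four and a half times.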

As AI capabilities advance, cheaper and more abundant energy could translate to greater available intelligence, giving regions with more energy capacity an edge in developing and adopting transformative AI systems; if these energy and infrastructure constraints persist, Europe risks seeing its AI ambitions capped not by talent or research strength, but by the basic physical limits of power availability, cost, and speed of deployment.

Critical materials present a parallel vulnerability. The transition to AI-intensive infrastructure requires rare earth elements, lithium, cobalt, and other materials where China dominates processing capacity. Europe cannot claim strategic autonomy while remaining dependent on a single supplier for inputs essential to everything from data center construction to renewable energy deployment.

Key considerations:

- The cost of autonomy: Reducing dependencies will likely keep consumer prices and inflation higher in Europe than in the US or China; leaders must be honest with the electorate that strategic sovereignty carries an economic premium.

- Diversify materials supply chains: Securing materials like cobalt, lithium, and rare earths without dependence on China requires the Global Gateway[88] initiative to deliver tangible results, offering African and South American partners a better value proposition than China’s Belt and Road. Europe should also invest in domestic processing capacity and strategic reserves of materials critical to AI infrastructure.

- Complete the energy union: The current fragmentation of energy markets makes power artificially expensive; implementation must prioritise cross-border interconnectors and a unified regulatory regime to allow surplus energy to flow frictionlessly.

- Fast-tracking firm power: Data centres need constant power, which renewables cannot supply without large-scale storage. Meeting demand may require politically difficult moves to fast-track permits for firm low-carbon sources such as Small Modular Reactors and geothermal, sited near AI compute zones.

Robustness: Critical in Arms Race where dependencies limit diplomatic autonomy; essential in Big AI for competing on infrastructure buildout; important in Takeoff for sustained compute capacity; valuable across Plateau and Diplomacy for reducing vulnerability to external pressure.

Invest in Collaborative AI Technologies and Public AI Competency

Member states and EU Commission

★★☆ Urgent

★★☆ Mid

★★☆ Moderate

What: Fund the development and deployment of collaborative AI technologies that strengthen democratic participation and societal resilience. This includes: (1) AI-assisted deliberation platforms that surface consensus rather than amplify division (modeled on Taiwan’s vTaiwan/Polis system[89]), accompanied by training programmes for government “Participation Officers” to facilitate these consultations, (2) community-driven verification systems where users collaboratively add context to potentially misleading content, with contributions validated by those who historically disagree, (3) synthetic media detection tools for institutions such as law enforcement, courts, media outlets, and election authorities, and (4) public programmes that develop active AI competency, meaning the ability to effectively use, evaluate, and participate in shaping AI systems, across all age groups, delivered for example through public libraries, community centres, and broadcasters.

Why it matters: The world has entered an era where the volume of AI-generated content is surpassing human creation,[90] a shift that fundamentally alters the texture of our information ecosystem. As text, image, and video models advance in stride, their ability to imitate reality has outpaced our capacity to discern it: recent research confirms that language models have effectively passed the Turing test[91] (successfully fooling human evaluators into believing they are conversing with another person in short interactions), while human ability to identify synthetic media remains only marginally better than chance.[92] The implications of this saturation are immediate and diverse: from the proliferation of spam, targeted scams, and non-consensual pornography to the erosion of public trust in media and the legal necessity of verifying content authenticity. As shared consensus on what constitutes reality fragments, AI-generated content can deepen societal polarisation, enabling precisely targeted disinformation campaigns that reinforce existing beliefs while undermining common ground for democratic deliberation. Future AI assistants, integrated into daily life to manage tasks, could be weaponised to subtly influence emotions and behaviours, enabling fraud or subverting democratic processes with personalised precision.

However, purely top-down technical solutions are insufficient and can actively backfire. Digital watermarking remains fragile and easily circumvented.[93] Heavy-handed content moderation and surveillance-based detection systems often fuel polarisation by appearing partisan or culturally biased, while raising serious privacy concerns through monitoring of private communications. When technical safeguards are seen as tools of censorship rather than protection, they undermine rather than strengthen democratic resilience. What works is a three-layered approach combining technical detection capabilities, active public competency, and mechanism design that harnesses collective intelligence. Taiwan demonstrated this at scale: the Polis platform enabled consensus-building across 26 national issues,[94] with 80% leading to government action.[95] Community-driven verification systems (where users collaboratively add context, with contributions validated by those who historically disagree) create trust through distributed reputation rather than centralized authority.

Europe must move beyond passive AI literacy toward active AI competency—equipping citizens not just to identify deepfakes, but to participate in shaping how AI systems operate in society. Finland’s model of training children as young as six[96] to identify misinformation demonstrates effectiveness, but the current approach leaves adults underserved.

Key considerations:

- Open-source requirement: Mandate funded platforms use open-source code (like Polis[97]) enabling Member State adaptation and preventing vendor lock-in.

- Integration with formal processes: Connect deliberation platforms to legislative processes with mandatory government responses when proposals reach consensus thresholds. Taiwan’s JOIN platform achieved over 50% population engagement by creating genuine policy influence, not standalone consultations.

- The privacy trade-off: Effective client-side detection must navigate the fine line between protecting users from manipulation and building a surveillance architecture that scans private correspondence.

- Healthy skepticism: The goal of education is skepticism, not cynicism. If public AI literacy campaigns make citizens believe nothing is real, trust in democratic institutions collapses.

Robustness: Most urgent in Plateau and Big AI where synthetic content scales with widespread model deployment; useful in Arms Race where information warfare intensifies between competing powers; important in Diplomacy for maintaining information integrity and trust; essential in Takeoff for preserving human ability to verify reality.

Cultivate Economic Indispensability Through AI-Ready Industries

Member states and EU Commission

★★☆ Urgent

★★☆ Mid

★★☆ Moderate

What: Identify and invest in European industries that sit at critical bottlenecks between AI capabilities and real-world impact: advanced manufacturing capacity that converts AI designs into physical products; industrial robotics and automation systems that execute AI instructions; proprietary industrial datasets that AI needs to optimise production; quality assurance and certification infrastructure that validates AI outputs; and specialized physical infrastructure (testing facilities, production equipment, semiconductor fabrication tools) that cannot easily relocate. As AI becomes cheaper and more abundant, what becomes scarce is not intelligence itself, but the capacity to deploy it. Make these sectors AI-compatible through automated production lines, comprehensive real-time data collection, digitised workflows, and reduced regulatory friction. When European industries become essential partners for AI-driven growth rather than mere customers, Europe gains natural leverage.

Why it matters: Europe is significantly behind in frontier AI development and risks becoming a pure consumer of AI services with no leverage over terms of access. Pure consumers can be cut off, charged premium prices, or subjected to conditions they cannot negotiate. Partners with something essential to offer cannot be treated the same way.

This opportunity exists across Member States, though unevenly. Germany and Italy possess substantial industrial capacity that AI systems will need to interface with. The Netherlands and Belgium anchor semiconductor supply chains. France and the Nordics lead in specialised manufacturing and digital infrastructure. Smaller Member States may lack scale individually, but can position themselves on specific bottlenecks—precision components, specialised materials, testing and certification services—where their capabilities are difficult to replicate quickly.

The EU’s Apply AI Strategy[98] represents an important first step, mobilizing €1 billion to accelerate AI adoption across eleven key sectors including healthcare, manufacturing, energy, and mobility. It also funds critical deployment infrastructure including AI-powered digital twins for manufacturing optimisation, digital twins for climate resilience and urban planning, and sector-specific AI models. Yet as frontier AI development shifts from scraping public internet data toward specialized datasets requiring tacit knowledge (the kind embedded in experienced engineers’ intuitions, shop-floor workers’ expertise, and proprietary industrial processes), Europe’s real competitive advantage may lie not in deployment alone, but in systematically collecting and digitising this industrial knowledge. Current approaches to data remain largely passive: companies accumulate operational data but rarely structure it for AI training. What’s needed is active data curation: transforming fragmented industrial datasets into interactive reinforcement learning environments where AI systems can learn from Europe’s accumulated industrial expertise. This requires moving beyond simply using AI in factories to deliberately capturing the knowledge that makes those factories work: quality control heuristics, failure mode diagnostics, optimisation rules that experienced operators apply intuitively.

Apply AI primarily focuses on deployment within end-user industries rather than strengthening the intermediary value chain that makes deployment possible. For example, while Apply AI might fund AI-powered healthcare screening centres, it doesn’t adequately address who builds the underlying diagnostic platforms, middleware, and data pipelines that make such centres possible. Similarly, manufacturing initiatives focus on adoption but not on developing European providers of industrial AI orchestration software, robotic control systems, or digital twin platforms.

The political economy of this approach is favourable. Unlike strategies that require visible pivots to AI-centric economic models, strengthening existing industrial sectors looks like doubling down on national strengths. It benefits incumbent industries and existing workforces rather than disrupting them, making it easier to build the domestic coalitions needed for implementation. The alternative is uncomfortable for countries that fail to find such positions: too small to develop frontier models, too peripheral to attract sustained AI investment, and too dependent to negotiate favourable terms.

Key considerations:

- Identify national bottlenecks: Each Member State should map sectors that sit between AI capability and real-world value creation, including advanced manufacturing, robotics, industrial data assets, quality assurance infrastructure, and physical production capacity that cannot easily relocate.

- Prioritise AI-compatibility: Policy should focus on making bottleneck sectors ready to absorb AI-driven efficiency gains: automated production lines, comprehensive real-time data collection, digitised workflows, and reduced regulatory friction for AI deployment.

- Coordinate for collective leverage: Individual Member States lack scale; the EU should facilitate pooling of industrial capacity, shared data infrastructures, and coordinated positioning in negotiations with AI powers, ensuring smaller states benefit from collective bargaining power.

- Think in months, not decades: The window for establishing strategic positions narrows as AI capabilities advance; policy should prioritise near-term competitiveness gains over long-horizon transformation programmes.

- Complement, don’t replace, chokepoint strategy: This approach works alongside semiconductor leverage: chokepoints provide defensive tools against coercion, while economic indispensability creates positive incentives for cooperation. Both are needed for a resilient strategic position.

Robustness: Most valuable in Big AI where it prevents pure consumer dependence on dominant providers; important in Arms Race for maintaining leverage with AI powers; useful in Plateau for capturing deployment value; provides negotiating position in Diplomacy; less relevant in Takeoff where rapid automation may bypass traditional industrial partnerships.

LEAD: Shaping Global AI Governance

Europe is unlikely to lead this decade by outspending frontier model developers in the United States or China. Capital requirements are too large, the industrial base too small, and talent too concentrated elsewhere. But influence over AI does not flow exclusively from building the most powerful systems. It also flows from setting the terms on which AI is developed, deployed, and governed, and here Europe has distinctive assets to exercise leadership.